Milestone Management Migration with SafeKit

Evidian SafeKit

Warning: these procedures are simple guidelines. Evidian is not an expert in Milestone migration procedures. They must be validated by Milestone support according to your Milestone versions.

3 migration scenarios are considered:

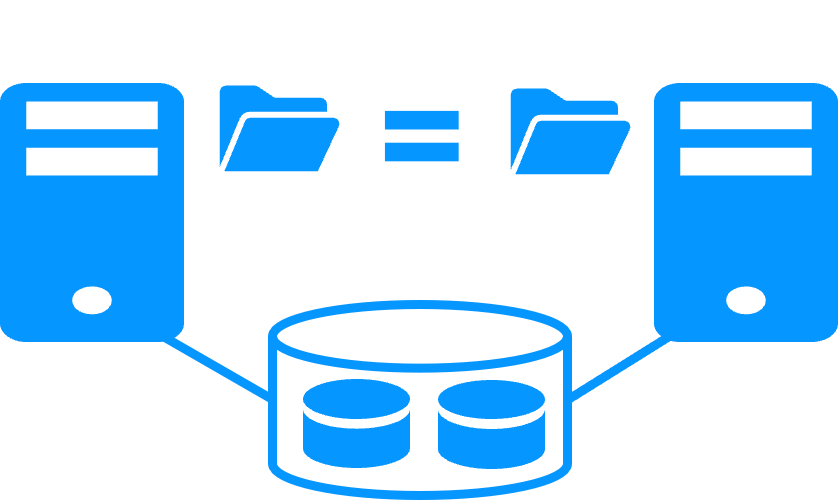

- 1. Management and SQL are in the same SafeKit cluster,

- 2. Management and SQL are in 2 different SafeKit clusters,

- 3. Management is in a SafeKit cluster with an external SQL not in a SafeKit cluster.

Note: the Milestone Management solution with SafeKit for high availability and redundancy is described here.

Scenario 1: Management and SQL are in the same SafeKit cluster

- Agree with the customer on a service interruption window for the migration.

- Check in the Windows registry of both management nodes that the connection to the SQL databases is really local to each node. According to this Milestone KB, the registry key for the SQL connection is HKEY_LOCAL_MACHINE\SOFTWARE\VideoOS\Server\ConnectionString.

- Make a backup of the SQL databases on MGT+SQL1-PRIM as a precaution. According to this Milestone KB, the default databases are Surveillance, Surveillance_IDP, SurveillanceLogServerV2, and Surveillance_IM.

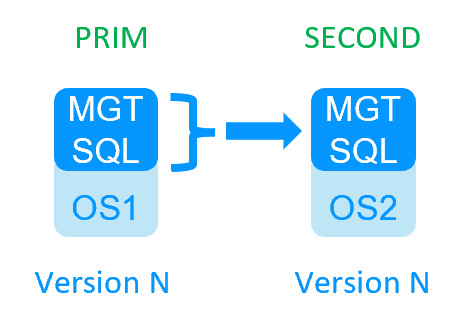

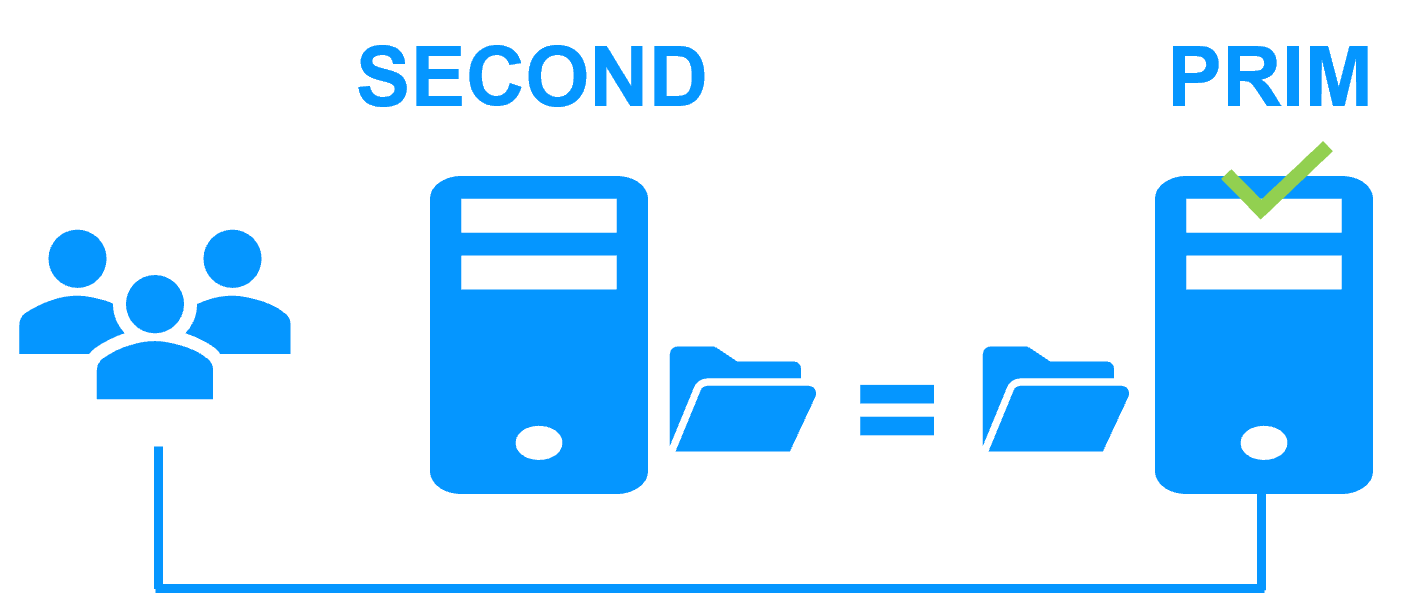

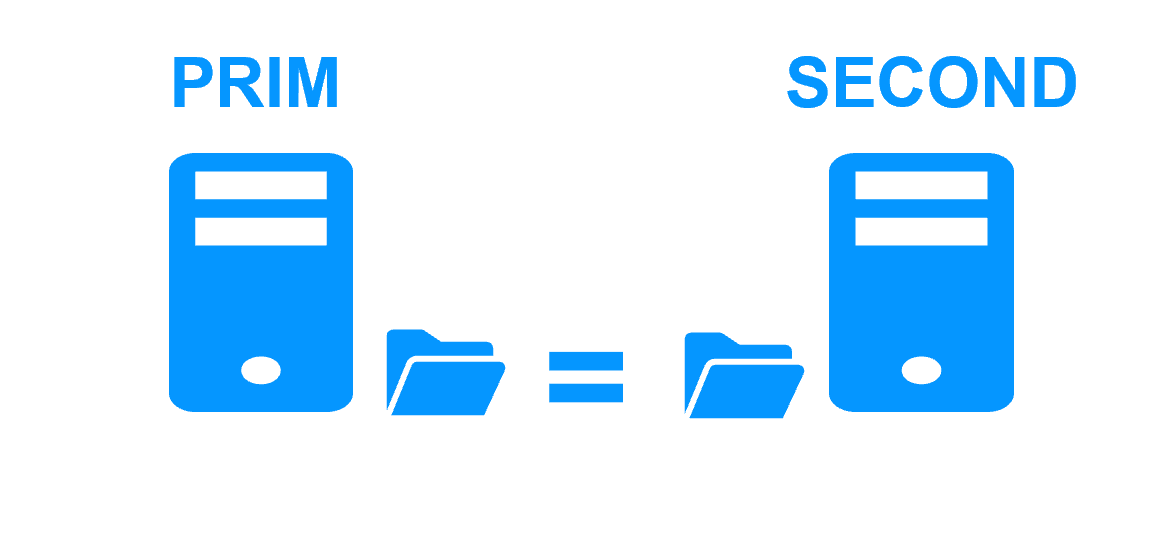

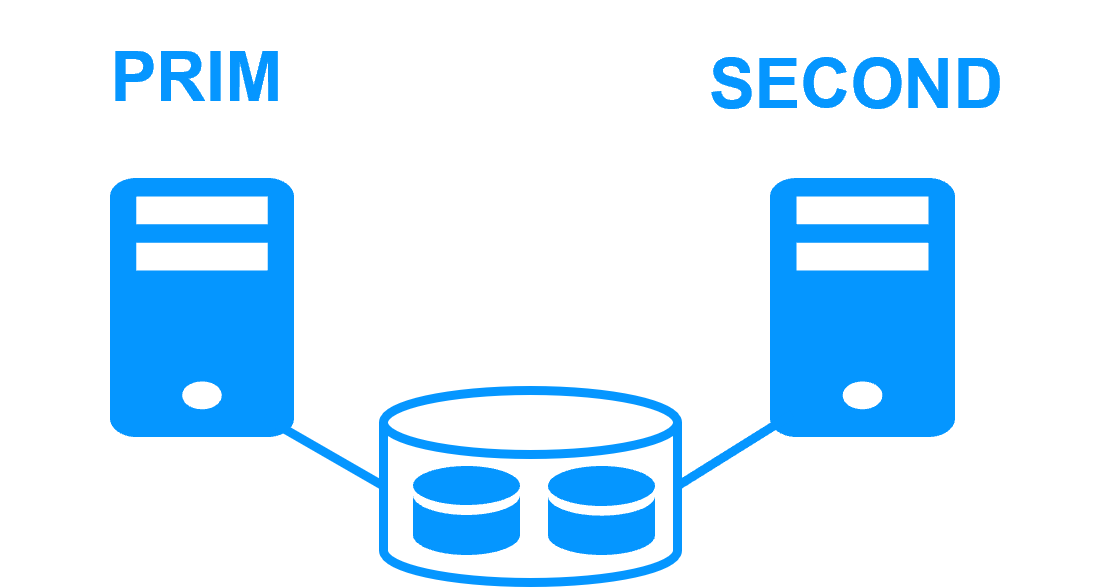

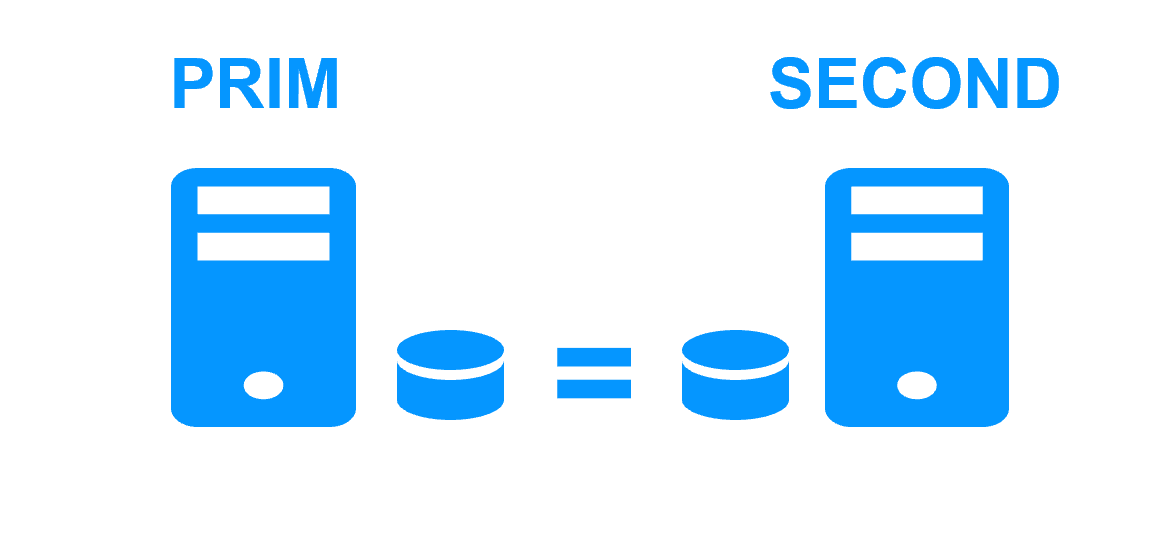

- Put the cluster in the (MGT+SQL1-PRIM, MGT+SQL2-SECOND) state, so that the Milestone SQL databases are identical on both nodes (provided that they are correctly configured for replication in the SafeKit module).

- Protect the nodes from an automatic start of the SafeKit module at boot during the migration (if nodes are rebooted for any reason during the migration).

Use the SafeKit web console "Control tab/Admin/Configure boot start/Disable".

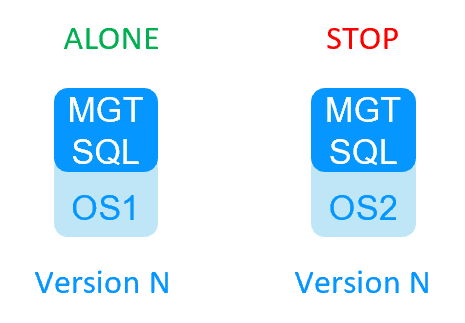

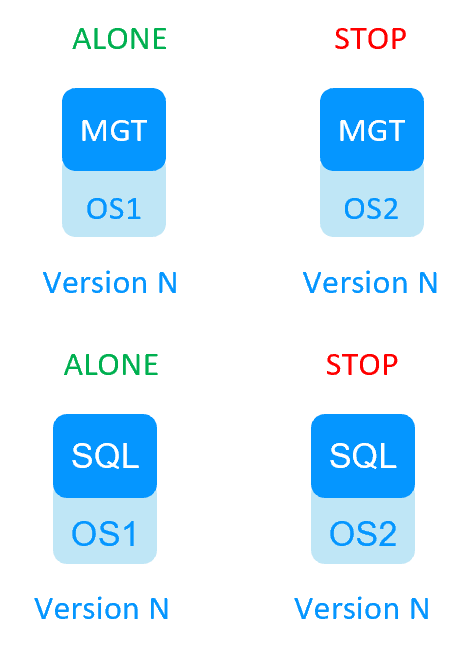

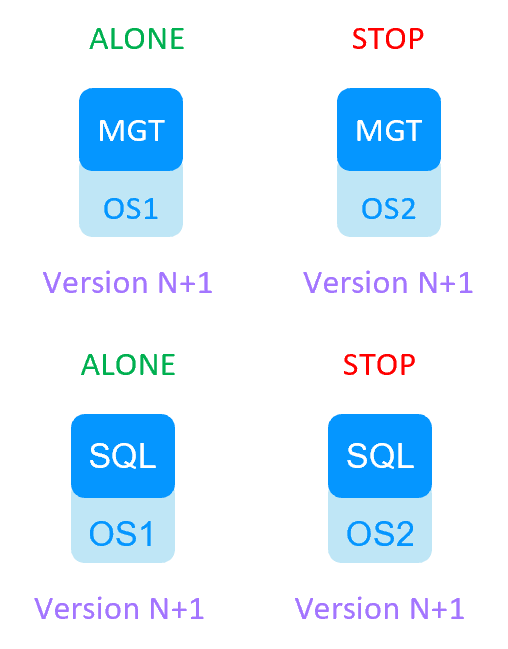

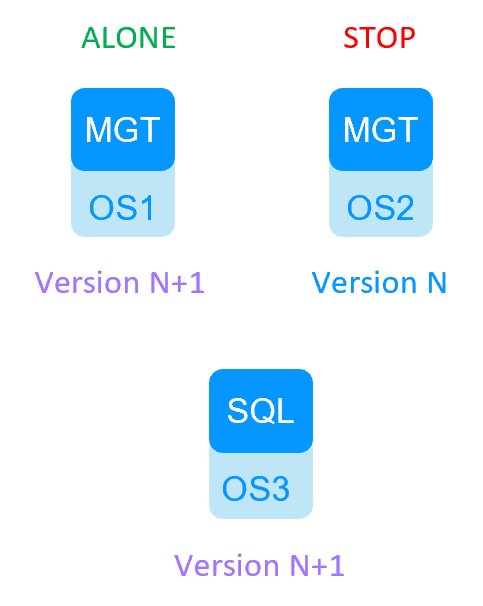

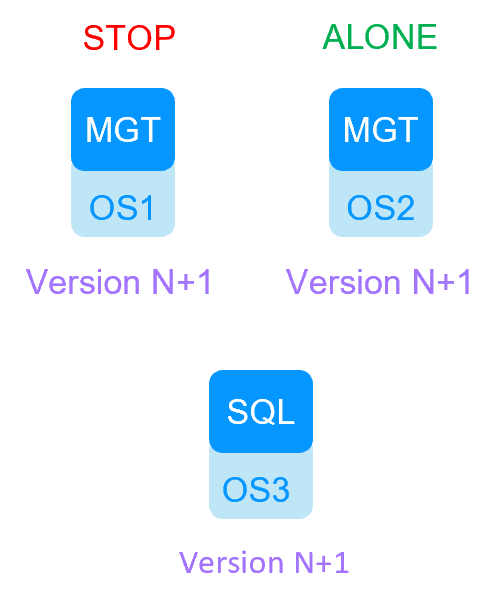

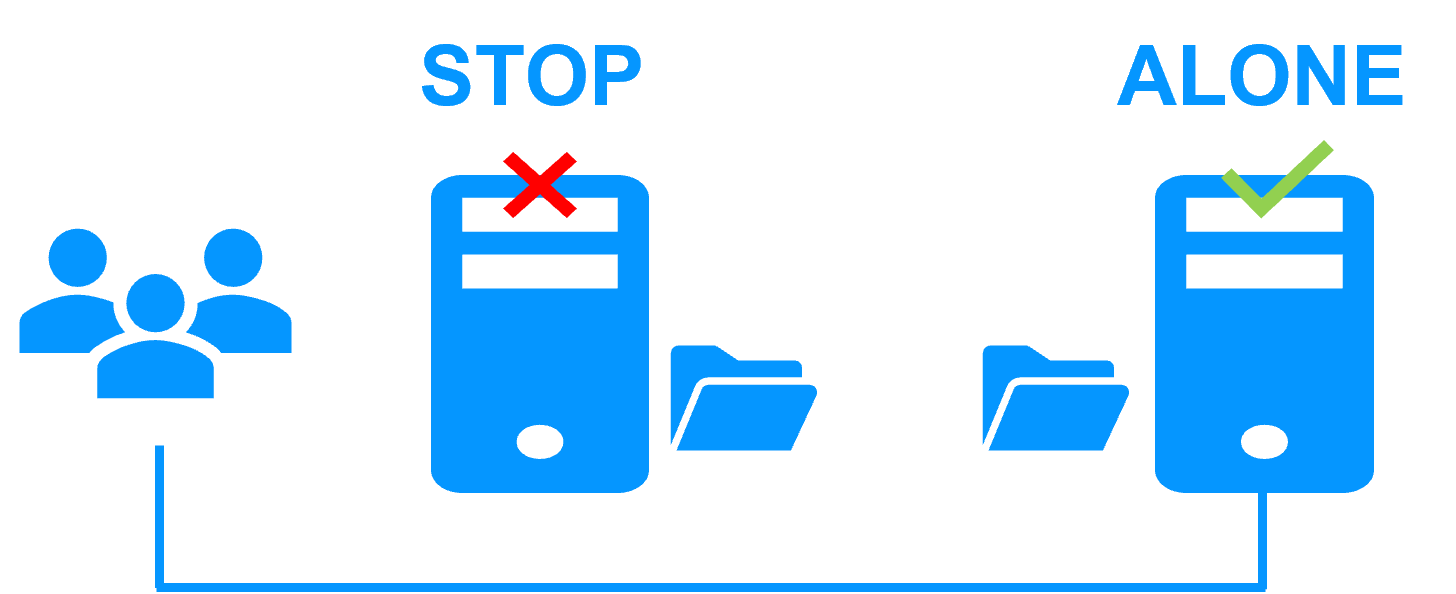

Do this on both nodes in the console.

- Put the cluster in (MGT+SQL1-ALONE, MGT+SQL2-STOP): there is no more replication from SQL1 to SQL2.

- Suspend the monitoring of processes during the migration on MGT+SQL1: use the SafeKit web console "Control tab/Admin/Errd suspend". Also suspend the other checkers: "Control tab/Admin/Checker off".

- Migrate MGT+SQL1 from Milestone version N to Milestone version N+1 with migration of the databases on node 1.

Note: the migration requires that the management services are running; that is why the migration is done in the ALONE state without SafeKit checkers.

- Register the virtual IP of the SafeKit management cluster with the Server Configurator GUI.

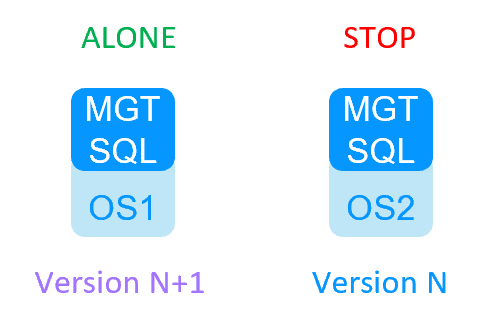

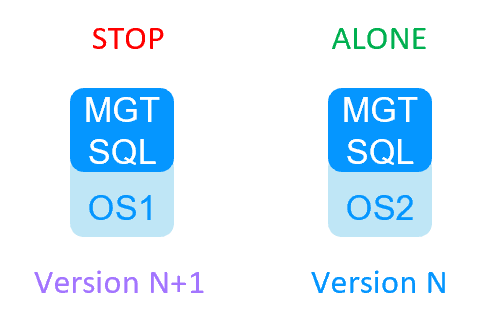

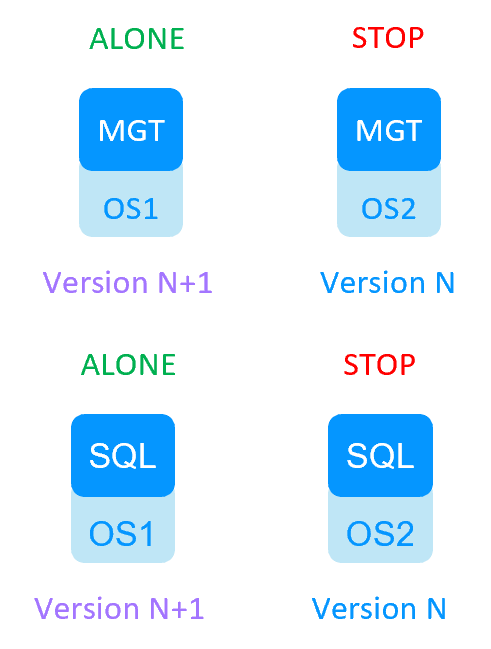

- At this step, MGT+SQL1 is migrated to Milestone version N+1 and MGT+SQL2 is in version N.

- DO NOT START MGT+SQL2 as SECOND during the previous steps; otherwise it will resynchronize the migrated databases from SQL1 (that is why the automatic start at boot is disabled and a backup is made as a precaution).

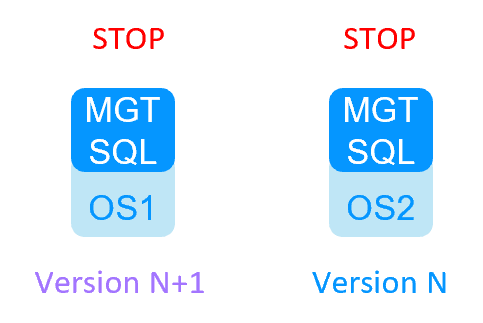

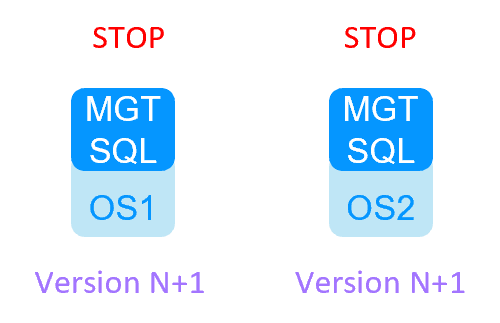

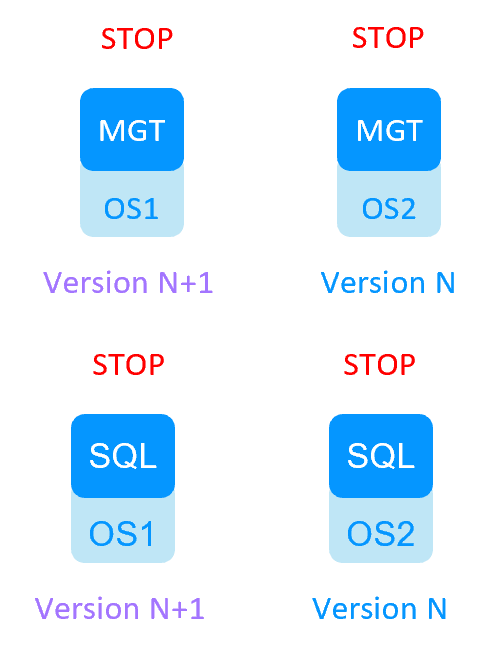

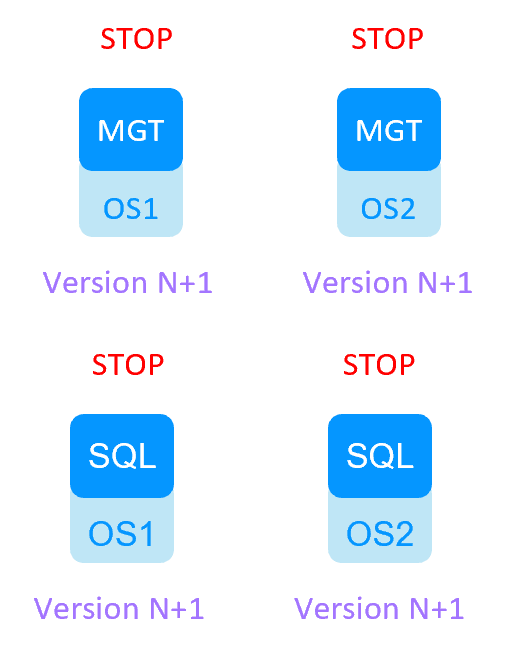

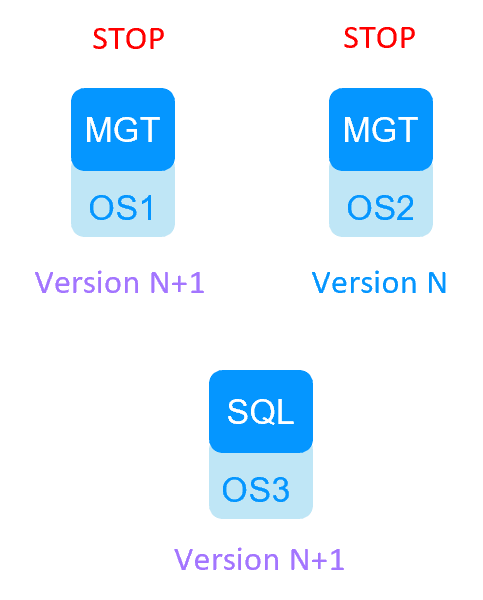

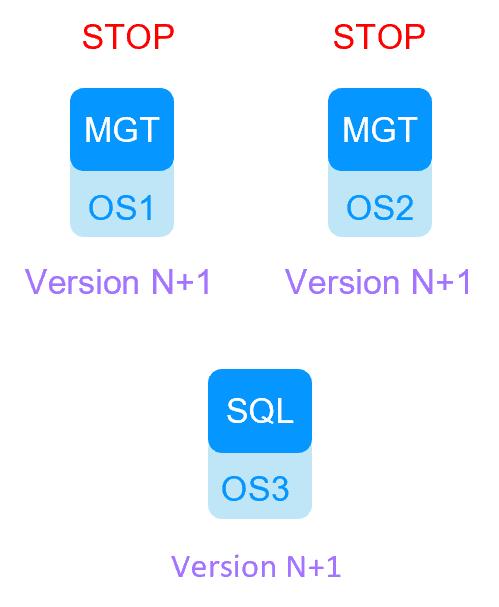

- Stop the ALONE SafeKit module: the cluster is in (MGT+SQL1-STOP, MGT+SQL2-STOP).

- Force start the SafeKit module as prim on MGT+SQL2, which is still on version N: use the SafeKit web console "Control tab/Expert/Force start/as prim".

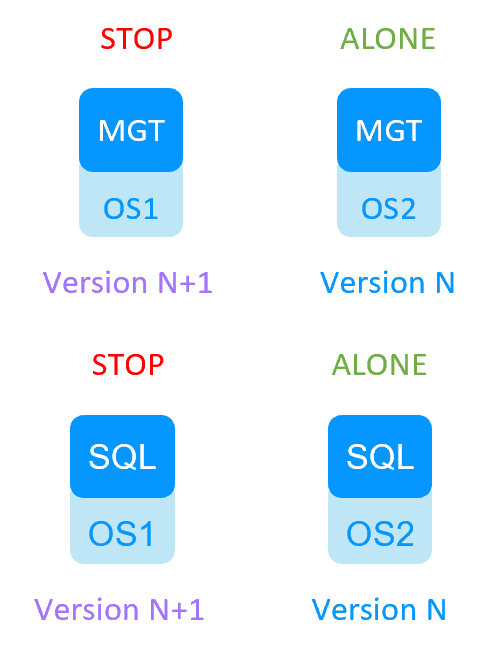

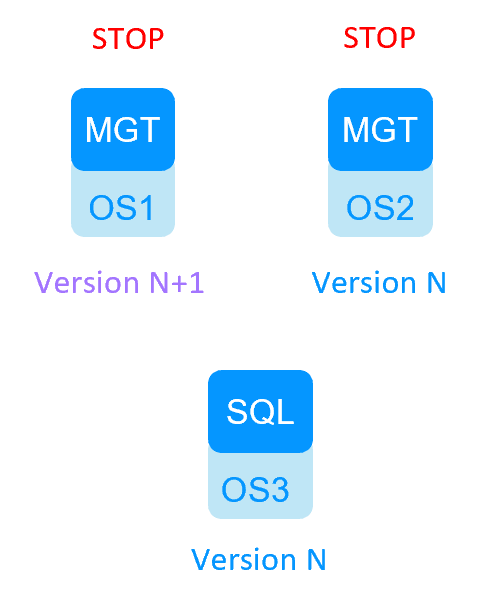

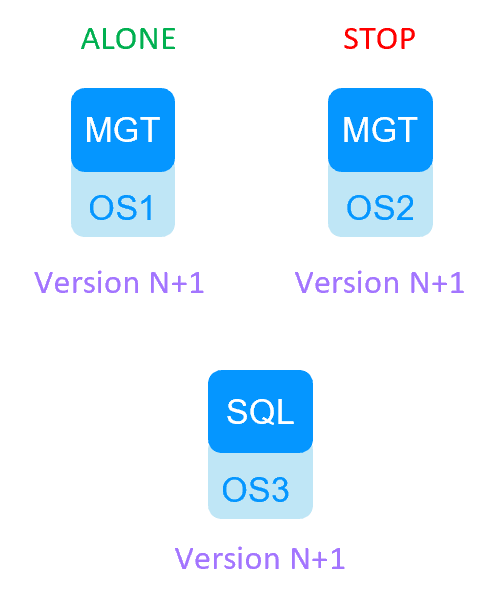

- The cluster is in (MGT+SQL1-STOP, MGT+SQL2-ALONE).

- Suspend the monitoring of processes during the migration on MGT+SQL2: use the SafeKit web console "Control tab/Admin/Errd suspend". Also suspend the other checkers: "Control tab/Admin/Checker off".

- Migrate MGT+SQL2 from Milestone version N to Milestone version N+1 with migration of the databases on node 2.

- Register the virtual IP of the SafeKit management cluster with the Server Configurator GUI.

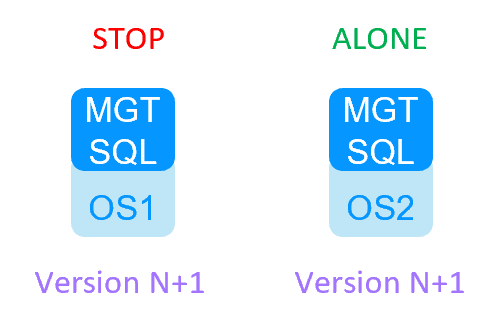

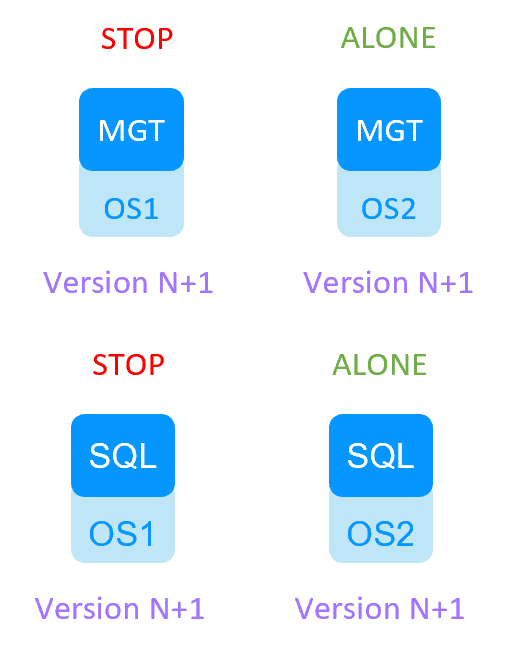

- At this step all nodes are migrated.

- Stop the SafeKit module on MGT+SQL2.

- The cluster is in (MGT+SQL1-STOP, MGT+SQL2-STOP).

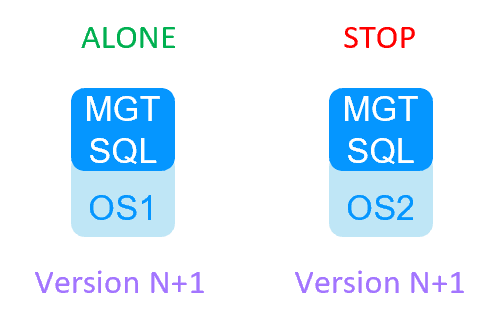

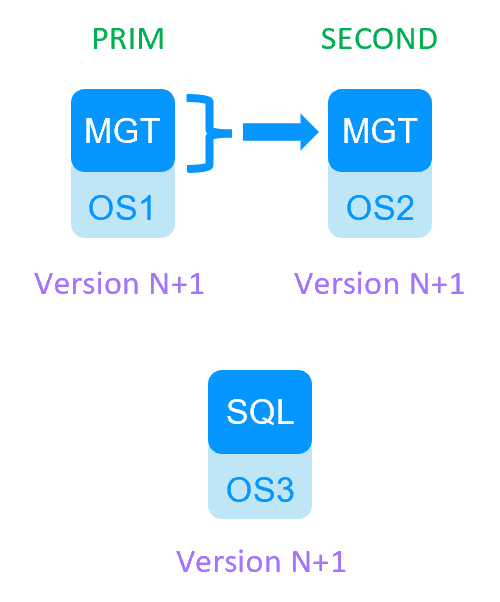

- Force start the SafeKit module as prim on MGT+SQL1 (if you want SQL1 to be the reference for the databases).

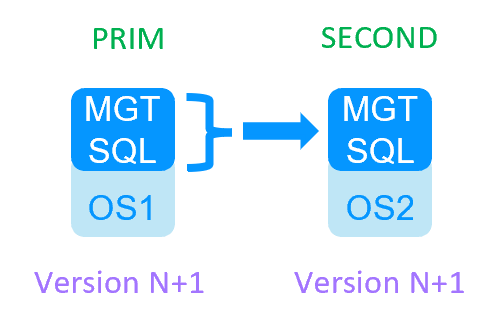

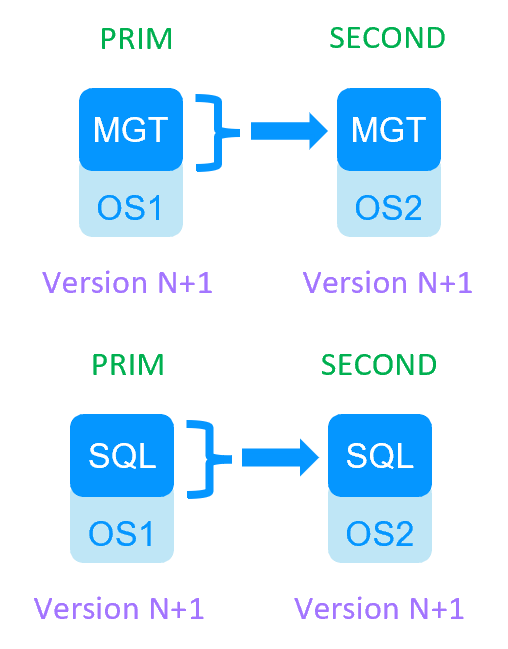

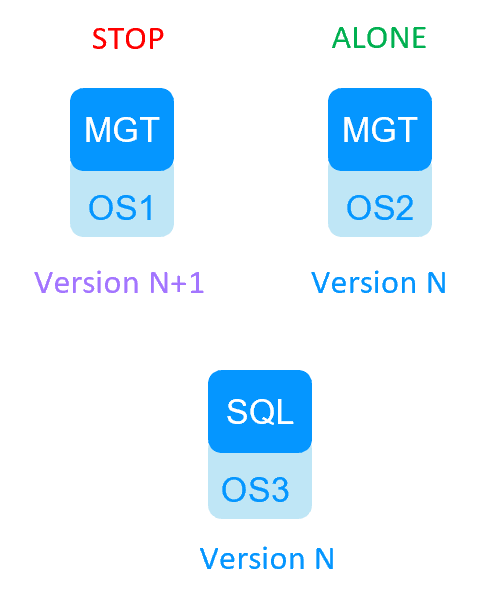

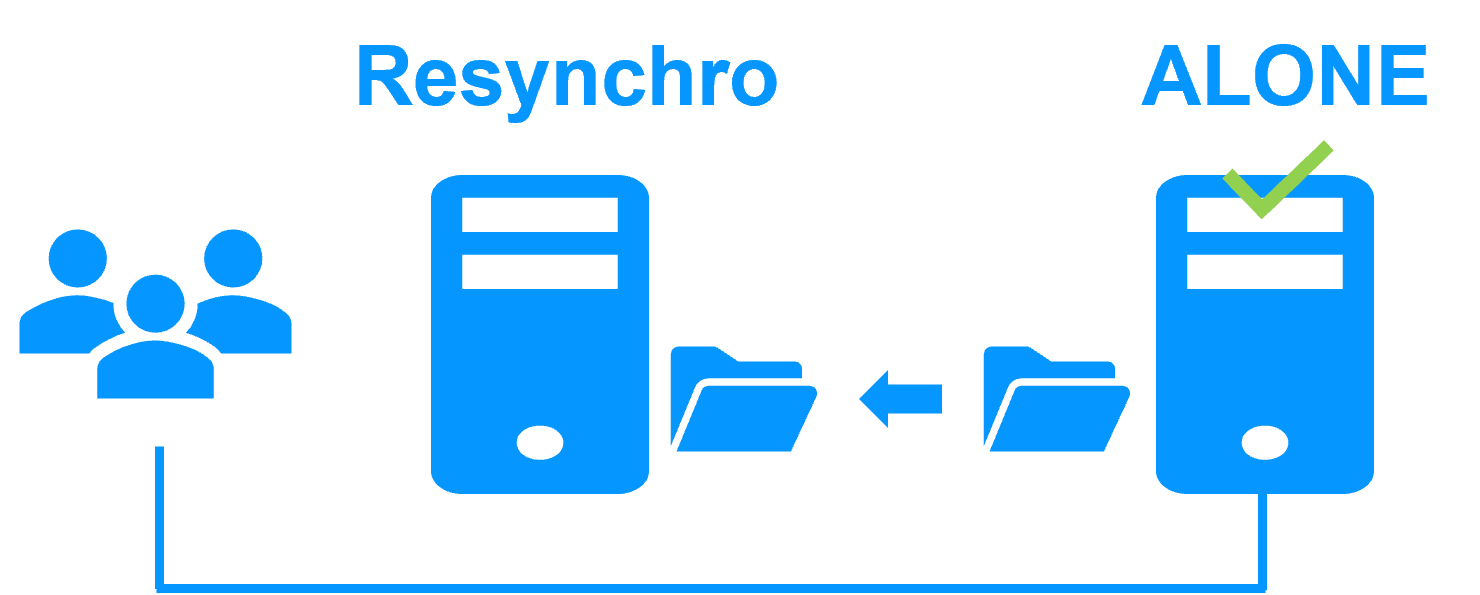

The cluster is in (MGT+SQL1-ALONE, MGT+SQL2-STOP).

- Force start the SafeKit module as second on MGT+SQL2.

The cluster is in (MGT+SQL1-PRIM, MGT+SQL2-SECOND); the SQL2 databases are resynchronized from SQL1.

- Re-enable the automatic start at boot of the SafeKit module and resume the errd and checker monitoring.

- The cluster is restarted and migrated.
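The ordering constraint behind this procedure (never let replication run while the two nodes hold databases of different Milestone versions) can be checked on paper with a small model. The sketch below is only an illustration: the step names and the `SEQUENCE` table are a hypothetical transcription of the states listed above, not output from SafeKit or Milestone.

```python
# Each entry: (step, node 1 state, node 2 state, node 1 version, node 2 version).
# "N" and "N+1" stand for the Milestone versions before and after migration.
SEQUENCE = [
    ("initial mirror",               "PRIM",  "SECOND", "N",   "N"),
    ("stop node 2",                  "ALONE", "STOP",   "N",   "N"),
    ("migrate node 1",               "ALONE", "STOP",   "N+1", "N"),
    ("stop node 1",                  "STOP",  "STOP",   "N+1", "N"),
    ("force start node 2 as prim",   "STOP",  "ALONE",  "N+1", "N"),
    ("migrate node 2",               "STOP",  "ALONE",  "N+1", "N+1"),
    ("stop node 2 again",            "STOP",  "STOP",   "N+1", "N+1"),
    ("force start node 1 as prim",   "ALONE", "STOP",   "N+1", "N+1"),
    ("force start node 2 as second", "PRIM",  "SECOND", "N+1", "N+1"),
]


def unsafe_steps(sequence):
    """Return the steps where replication is active while versions differ."""
    bad = []
    for step, state1, state2, version1, version2 in sequence:
        # Replication only runs when one node is PRIM and the other SECOND.
        replicating = {state1, state2} == {"PRIM", "SECOND"}
        if replicating and version1 != version2:
            bad.append(step)
    return bad


if __name__ == "__main__":
    print("unsafe steps:", unsafe_steps(SEQUENCE))
```

Starting node 2 as SECOND right after the migration of node 1 would show up as an unsafe step in this model, which is the situation the "DO NOT START" warning guards against.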

Scenario 2: Management and SQL are in 2 different SafeKit clusters

- Agree with the customer on a service interruption window for the migration.

- Check in the Windows registry of both management nodes that the connection to the SQL databases is really external and points to the virtual IP of the SafeKit SQL cluster. According to this Milestone KB, the registry key for the SQL connection is HKEY_LOCAL_MACHINE\SOFTWARE\VideoOS\Server\ConnectionString.

- Make a backup of the SQL databases on SQL1-PRIM as a precaution. According to this Milestone KB, the default databases are Surveillance, Surveillance_IDP, SurveillanceLogServerV2, and Surveillance_IM.

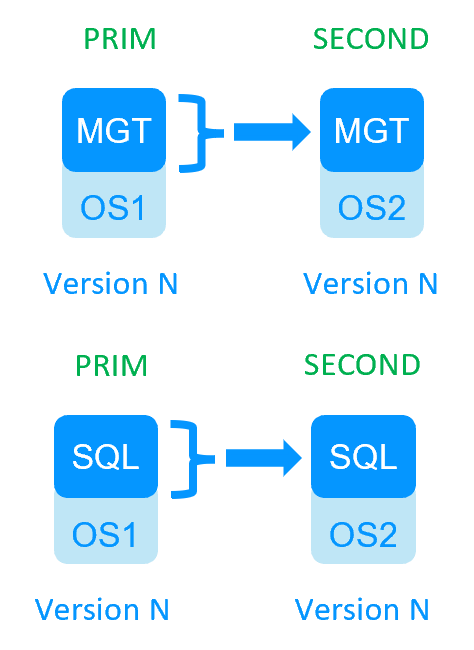

- Put the clusters in the (MGT1+SQL1-PRIM, MGT2+SQL2-SECOND) state, so that the Milestone SQL databases are identical on both SQL nodes (provided that they are correctly configured for replication in the SafeKit SQL module).

- Protect the nodes from an automatic start of the SafeKit module at boot during the migration (if nodes are rebooted for any reason during the migration).

Use the SafeKit web console "Control tab/Admin/Configure boot start/Disable".

Do this on the 4 nodes in the console.

- Put the clusters in (MGT1+SQL1-ALONE, MGT2+SQL2-STOP): there is no more replication from SQL1 to SQL2.

- Suspend the monitoring of processes during the migration on MGT1: use the SafeKit web console "Control tab/Admin/Errd suspend". Also suspend the other checkers: "Control tab/Admin/Checker off".

- Migrate MGT1+SQL1 from Milestone version N to Milestone version N+1 with migration of the databases on SQL1.

Note: the migration requires that the management services are running; that is why the migration is done in the ALONE state without SafeKit checkers.

- Register the virtual IP of the SafeKit management cluster (NOT the SQL virtual IP address) with the Server Configurator GUI.

- At this step, MGT1+SQL1 are migrated to Milestone version N+1 and MGT2+SQL2 are in version N.

- DO NOT START SQL2 as SECOND during the previous steps; otherwise it will resynchronize the migrated databases from SQL1 (that is why the automatic start at boot is disabled and a backup is made as a precaution).

- Stop SafeKit on ALONE servers: the clusters are in (MGT1+SQL1-STOP, MGT2+SQL2-STOP).

- Force start the SafeKit modules as prim on MGT2+SQL2, which are still on version N: use the SafeKit web console "Control tab/Expert/Force start/as prim".

- The clusters are in (MGT1+SQL1-STOP, MGT2+SQL2-ALONE).

- Suspend the monitoring of processes during the migration on MGT2: use the SafeKit web console "Control tab/Admin/Errd suspend". Also suspend the other checkers: "Control tab/Admin/Checker off".

- Migrate MGT2+SQL2 from Milestone version N to Milestone version N+1 with migration of the databases on SQL2.

- Register the virtual IP of the SafeKit management cluster (NOT the SQL virtual IP address) with the Server Configurator GUI.

- At this step all nodes are migrated.

- Stop the SafeKit modules on MGT2+SQL2.

- The clusters are in (MGT1+SQL1-STOP, MGT2+SQL2-STOP).

- Force start the SafeKit modules as prim on MGT1+SQL1 (if you want SQL1 to be the reference for the databases).

The clusters are in (MGT1+SQL1-ALONE, MGT2+SQL2-STOP).

- Force start the SafeKit modules as second on MGT2+SQL2.

The clusters are in (MGT1+SQL1-PRIM, MGT2+SQL2-SECOND); the SQL2 databases are resynchronized from SQL1.

- Re-enable the automatic start at boot of the SafeKit modules and resume the errd and checker monitoring.

- The clusters are restarted and migrated.
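The registry check at the start of each procedure can be partly automated. The sketch below is a minimal example under two assumptions that should be verified with Milestone support: that the connection strings are stored as values under the registry key named above, and that they use the usual SQL Server "key=value;" syntax. The function names are illustrative and are not part of any Milestone or SafeKit tool.

```python
def parse_connection_string(conn_str):
    """Split a 'key=value;key=value' string into a dict with lower-case keys."""
    pairs = {}
    for part in conn_str.split(";"):
        if "=" in part:
            key, value = part.split("=", 1)
            pairs[key.strip().lower()] = value.strip()
    return pairs


def sql_host(conn_str):
    """Return the host of the data source, without instance name or port."""
    fields = parse_connection_string(conn_str)
    source = fields.get("data source") or fields.get("server") or ""
    if source.lower().startswith("tcp:"):
        source = source[4:]
    # Drop a named instance ("host\INSTANCE") and a port ("host,1433").
    return source.split("\\")[0].split(",")[0].strip().lower()


def points_to(conn_str, expected_hosts):
    """True if the connection string targets one of the expected hosts."""
    return sql_host(conn_str) in {host.lower() for host in expected_hosts}


def read_connection_strings():
    """Windows only: read all values under the key named in the procedure.

    Assumption: the connection strings are values of this key.
    """
    import winreg
    path = r"SOFTWARE\VideoOS\Server\ConnectionString"
    with winreg.OpenKey(winreg.HKEY_LOCAL_MACHINE, path) as key:
        value_count = winreg.QueryInfoKey(key)[1]
        return dict(winreg.EnumValue(key, i)[:2] for i in range(value_count))
```

In scenario 1 the expected hosts would be the local names of the node (for example "localhost" or the machine name); in scenario 2, the virtual IP of the SafeKit SQL cluster; in scenario 3, the address of the external SQL server, identical on both management nodes.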

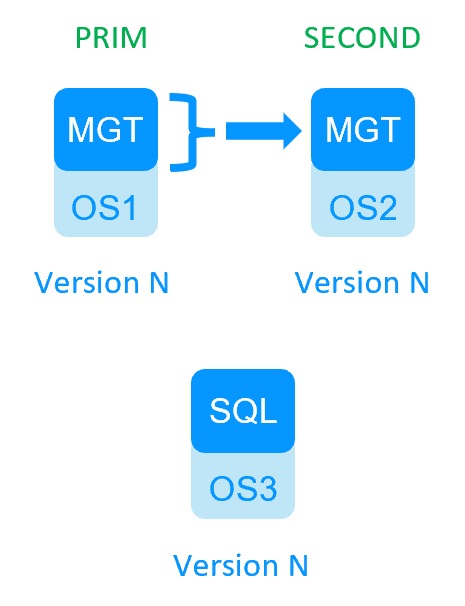

Scenario 3: Management is in a SafeKit cluster with an external SQL not in a SafeKit cluster

- Agree with the customer on a service interruption window for the migration.

- Check in the Windows registry of both management nodes that the connection to the SQL databases is really external and the same for each node. According to this Milestone KB, the registry key for the SQL connection is HKEY_LOCAL_MACHINE\SOFTWARE\VideoOS\Server\ConnectionString.

- Make a backup of the databases on the external SQL as a precaution and also for the restore before the MGT2 migration (step 12). According to this Milestone KB, the default databases are Surveillance, Surveillance_IDP, SurveillanceLogServerV2, and Surveillance_IM.

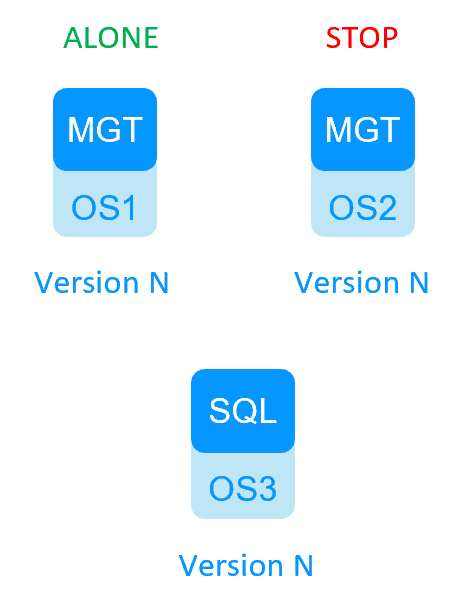

This step is mandatory for step 12.

- Put the cluster in (MGT1-PRIM, MGT2-SECOND).

- Protect the SafeKit nodes from an automatic start of the SafeKit module at boot during the migration (if nodes are rebooted for any reason during the migration).

Use the SafeKit web console "Control tab/Admin/Configure boot start/Disable".

Do this on both management nodes in the console.

- Put the cluster in (MGT1-ALONE, MGT2-STOP).

- Suspend the monitoring of processes during the migration on MGT1: use the SafeKit web console "Control tab/Admin/Errd suspend". Also suspend the other checkers: "Control tab/Admin/Checker off".

- Migrate MGT1 from Milestone version N to Milestone version N+1 with migration of the databases on the external SQL.

Note: the migration requires that the management services are running; that is why the migration is done in the ALONE state without SafeKit checkers.

- Register the virtual IP of the SafeKit management cluster (NOT the SQL IP address) with the Server Configurator GUI.

- At this step, MGT1 and the external SQL databases are migrated to Milestone version N+1 and MGT2 is still on version N.

- Stop the ALONE SafeKit module: the cluster is in (MGT1-STOP, MGT2-STOP) with the external database migrated to version N+1.

- For the MGT2 migration to succeed, restore the backup of the databases taken at step 3 (as a precaution before restoring, you can take a backup of the databases newly migrated to version N+1). The cluster is in (MGT1-STOP, MGT2-STOP) with the external databases restored to version N.

- Force start the MGT2 SafeKit module as prim, still on version N: use the SafeKit web console "Control tab/Expert/Force start/as prim".

- On MGT2, if the management services do not restart after the database restore, you may have to reset the Milestone IDP on MGT2: reset it with the command iisreset /restart and register the virtual IP of the SafeKit management cluster (NOT the SQL IP address) with the Server Configurator GUI. Check with Milestone support.

- The cluster is in (MGT1-STOP, MGT2-ALONE).

- Suspend the monitoring of processes during the migration on MGT2: use the SafeKit web console "Control tab/Admin/Errd suspend". Also suspend the other checkers: "Control tab/Admin/Checker off".

- Migrate MGT2 from Milestone version N to Milestone version N+1 with migration of the databases on the external SQL.

- Register the virtual IP of the SafeKit management cluster (NOT the SQL IP address) with the Server Configurator GUI.

- At this step all nodes are migrated.

- Stop the SafeKit module on MGT2.

- The cluster is in (MGT1-STOP, MGT2-STOP).

- Force start the SafeKit module as prim on MGT1.

The cluster is in (MGT1-ALONE, MGT2-STOP).

- Force start the SafeKit module as second on MGT2.

The cluster is in (MGT1-PRIM, MGT2-SECOND).

- Re-enable the automatic start at boot of the SafeKit module and resume the errd and checker monitoring.

- The cluster is restarted and migrated.
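Because this scenario depends on restoring the step 3 backup, it can help to script that backup so that all four default databases are covered. The sketch below only generates standard T-SQL BACKUP DATABASE statements for review; the backup directory, the file-name tag, and the choice of the COPY_ONLY, INIT, and CHECKSUM options are assumptions made for illustration and should be adapted to your backup policy and checked with Milestone support.

```python
# Default database names as listed in the procedure above.
DEFAULT_DATABASES = [
    "Surveillance",
    "Surveillance_IDP",
    "SurveillanceLogServerV2",
    "Surveillance_IM",
]


def backup_statements(backup_dir, tag, databases=DEFAULT_DATABASES):
    """Build one T-SQL BACKUP DATABASE statement per database.

    backup_dir and tag are hypothetical values chosen by the operator.
    """
    statements = []
    for name in databases:
        target = f"{backup_dir}\\{name}_{tag}.bak"
        statements.append(
            f"BACKUP DATABASE [{name}] TO DISK = N'{target}' "
            "WITH COPY_ONLY, INIT, CHECKSUM;"
        )
    return statements


if __name__ == "__main__":
    # Print the statements so they can be reviewed before being run
    # with the SQL Server tool of your choice.
    for statement in backup_statements(r"D:\Backup", "before_migration"):
        print(statement)
```

Running the same function with a different tag gives the optional second backup of the databases migrated to version N+1, taken before the restore.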

Partners, the success with SafeKit

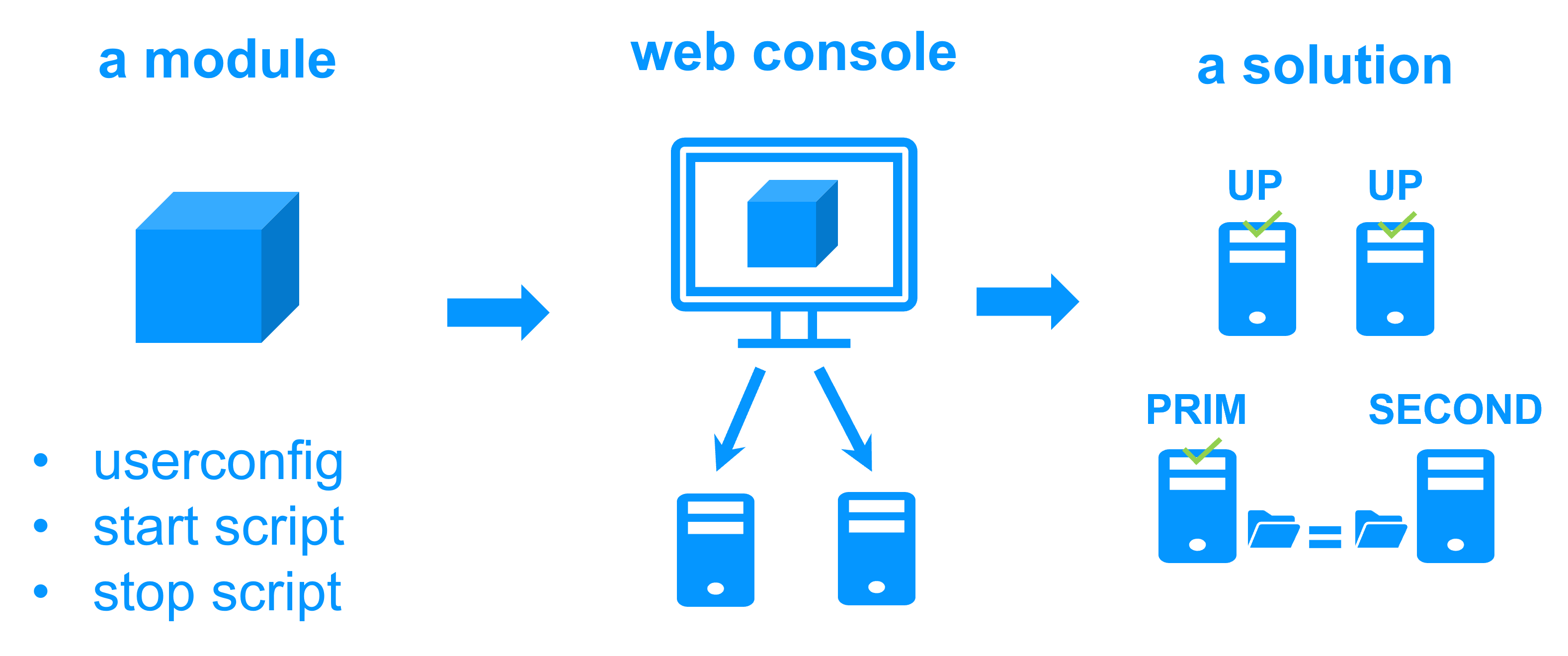

This platform-agnostic solution is ideal for a partner who resells a critical application and wants to offer many customers a redundancy and high availability option that is easy to deploy.

With many references in many countries won by partners, SafeKit has proven to be the easiest solution to implement for redundancy and high availability of building management, video management, access control, SCADA software...

Building Management Software (BMS)

Video Management Software (VMS)

Electronic Access Control Software (EACS)

SCADA Software (Industry)

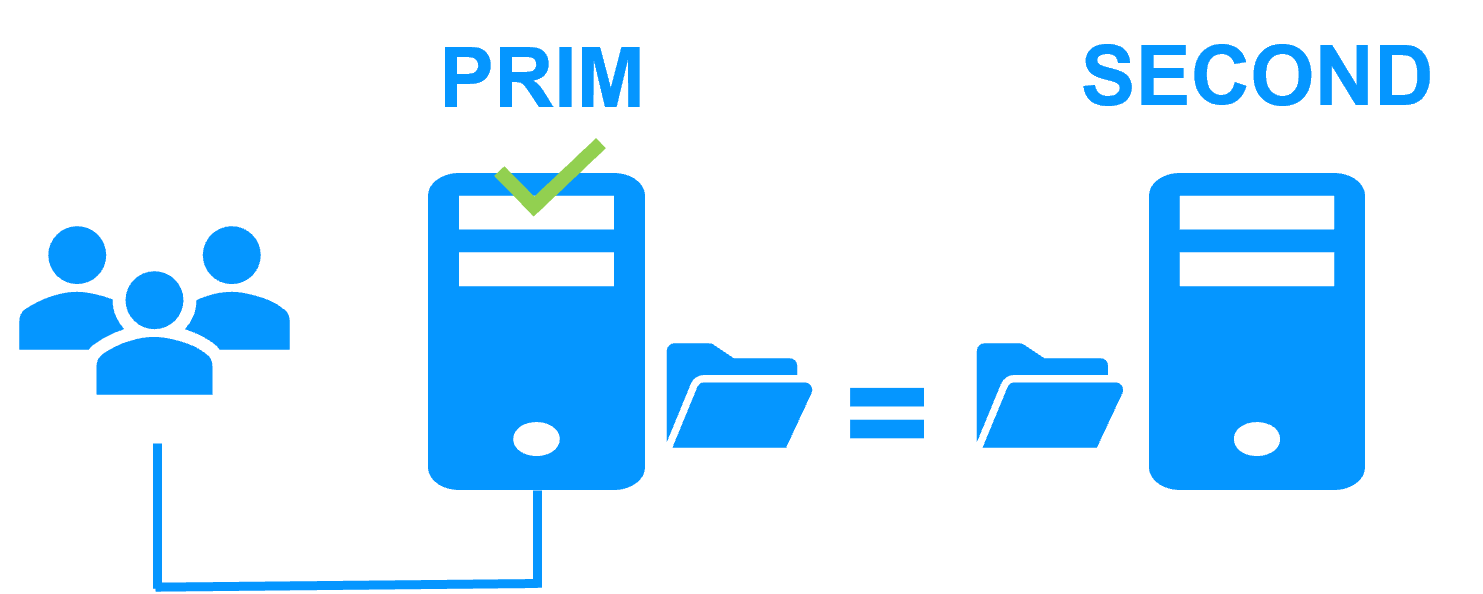

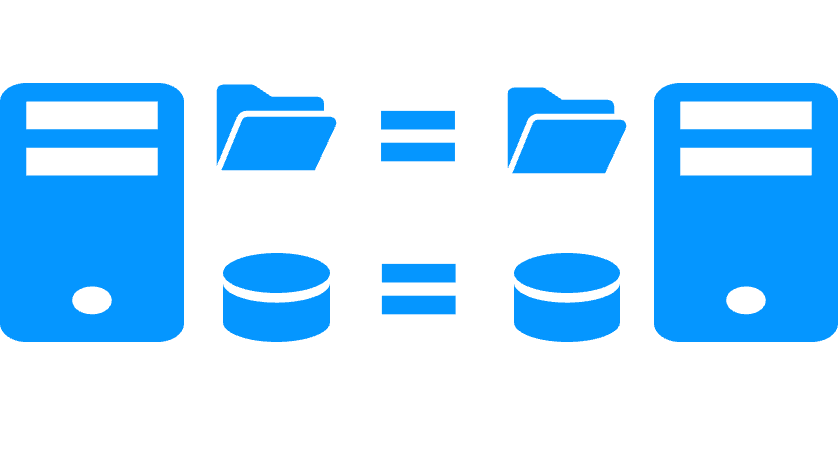

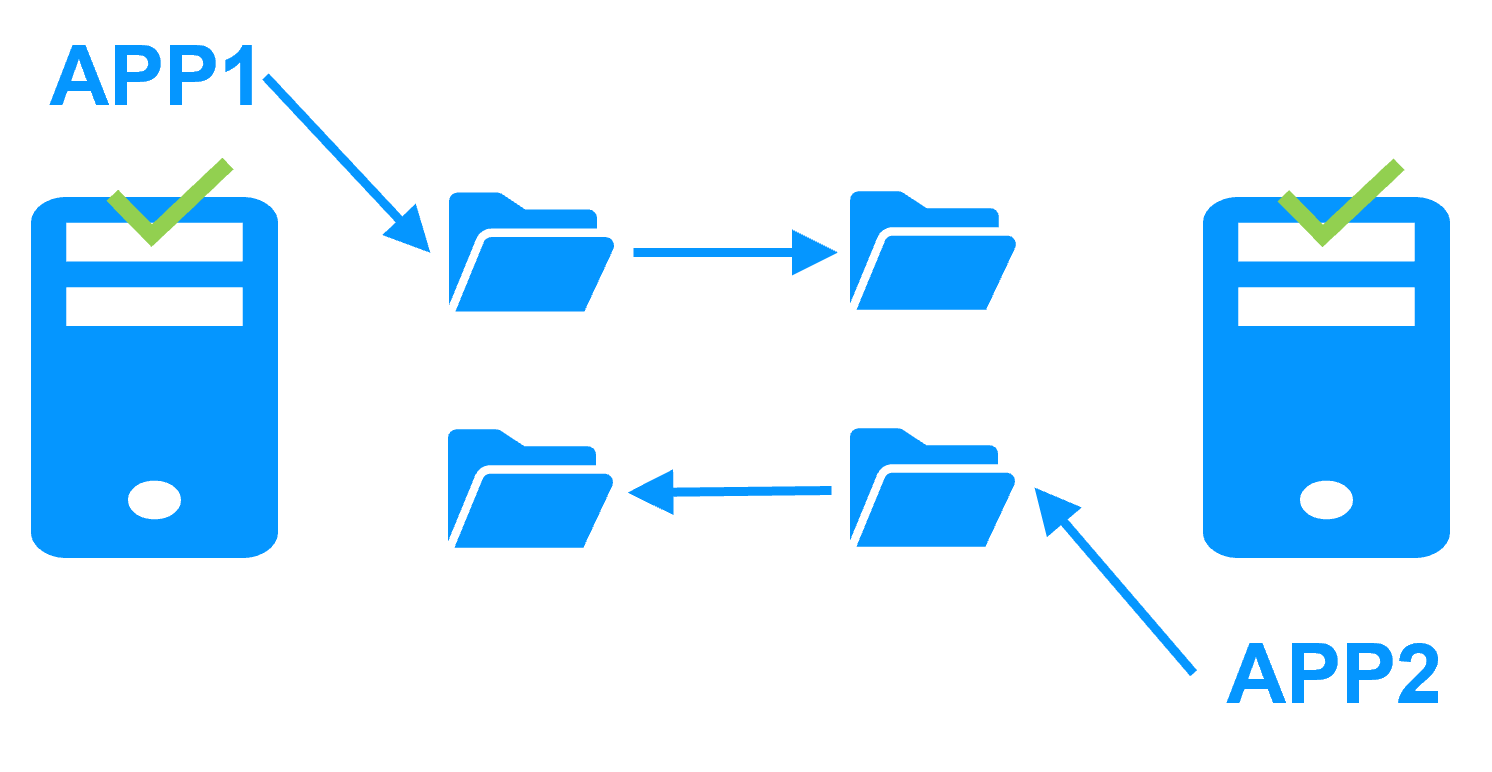

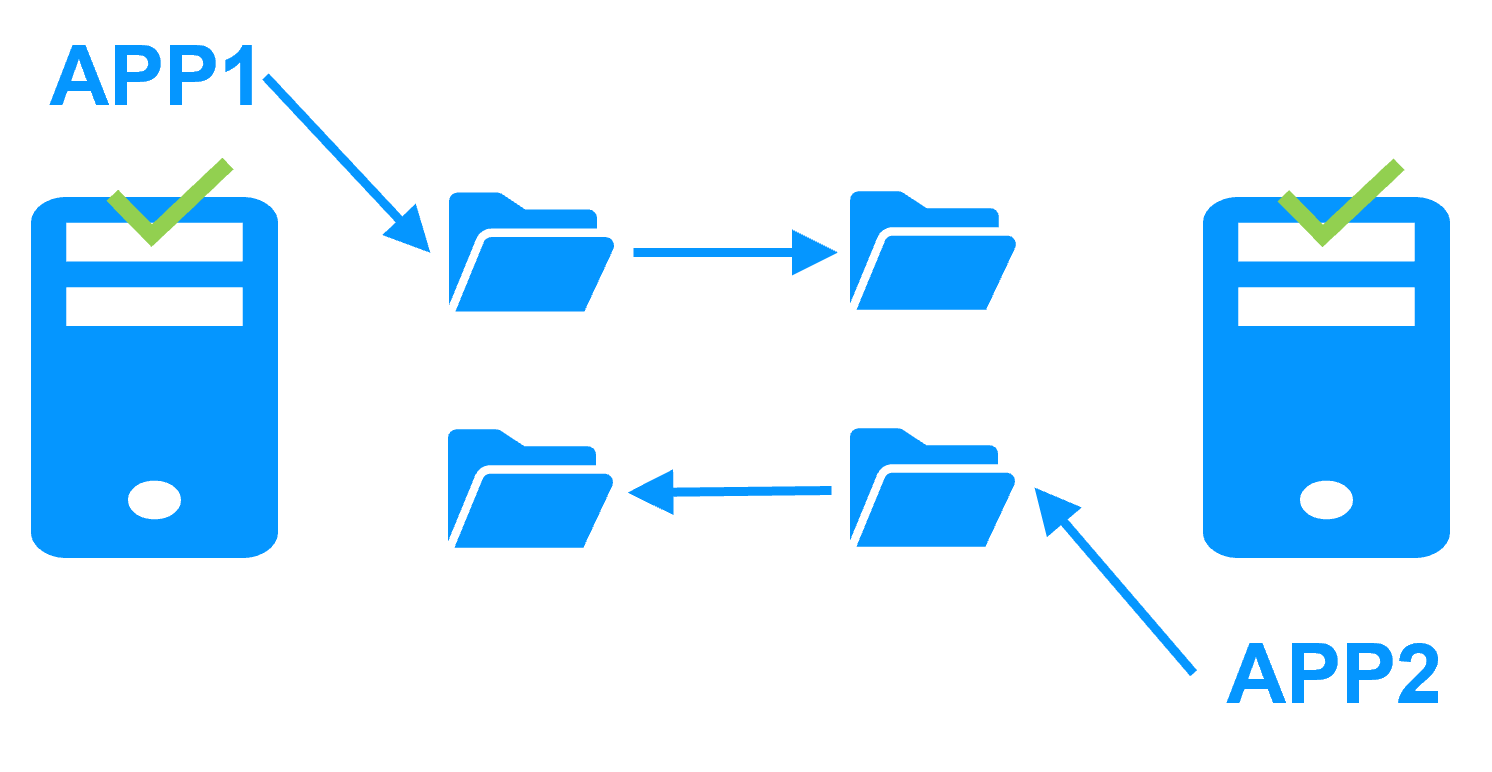

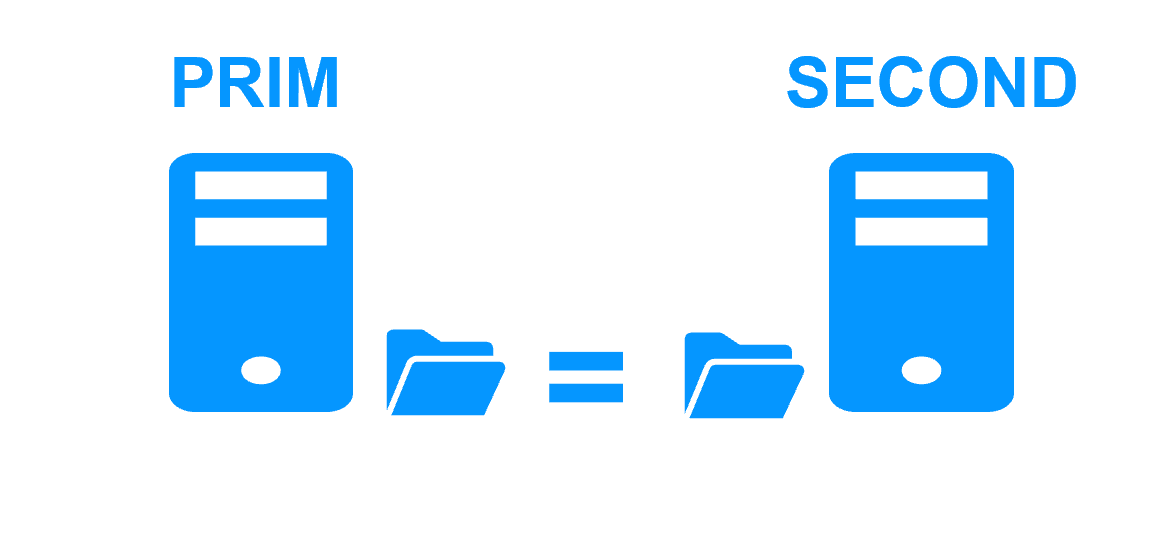

Step 1. Real-time replication

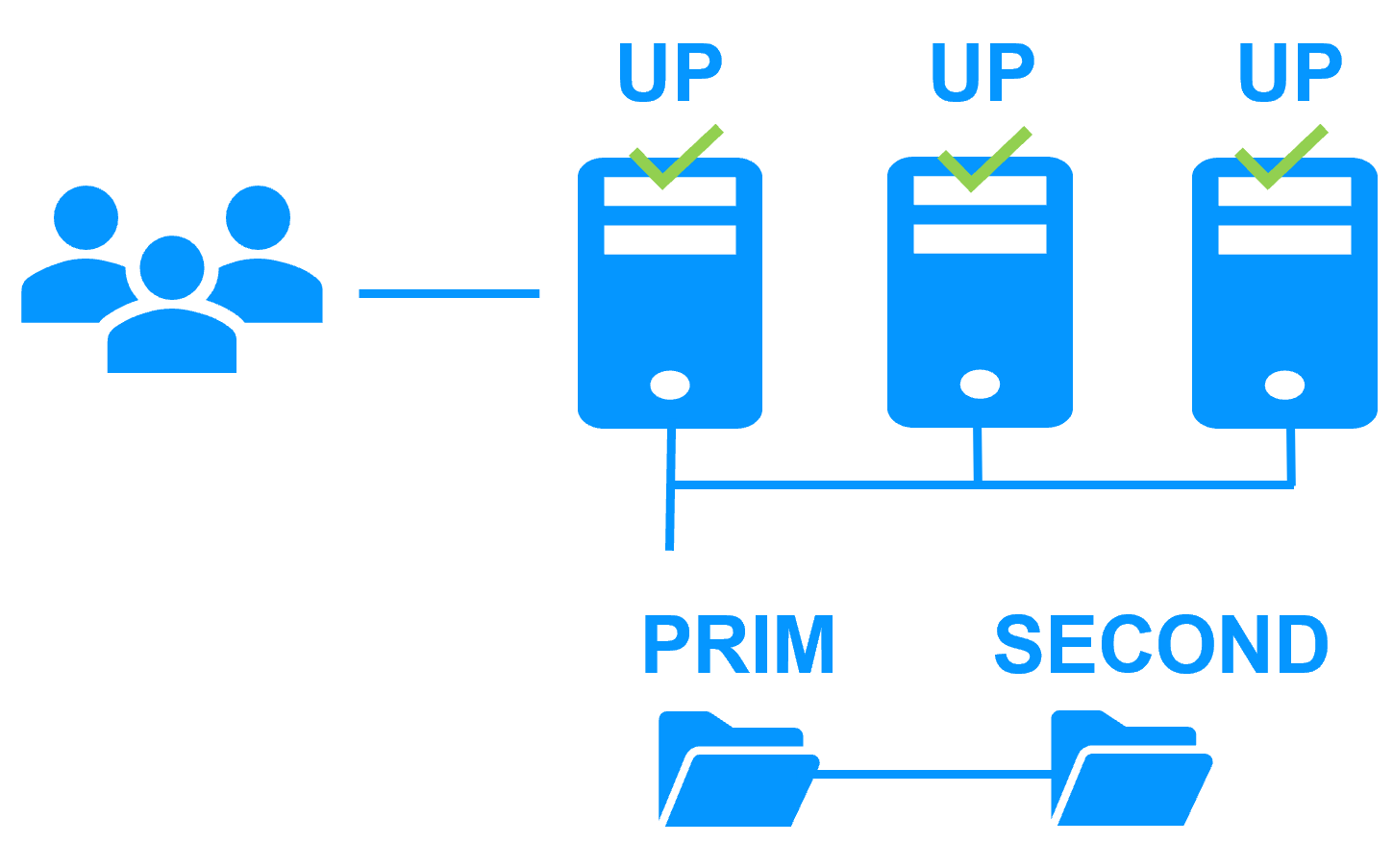

Server 1 (PRIM) runs the Windows or Linux application. Clients are connected to a virtual IP address. SafeKit replicates in real time modifications made inside files through the network.

The replication is synchronous with no data loss on failure contrary to asynchronous replication.

You just have to configure the names of directories to replicate in SafeKit. There are no pre-requisites on disk organization. Directories may be located in the system disk.

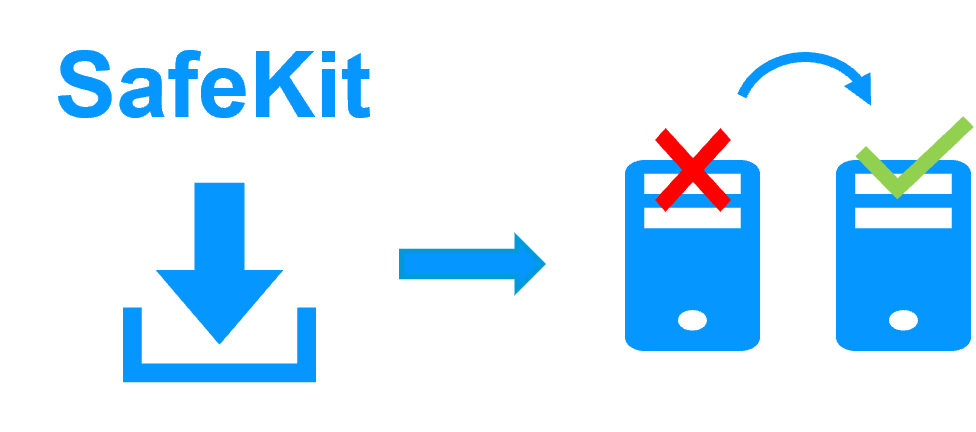

Step 2. Automatic failover

When Server 1 fails, Server 2 takes over. SafeKit switches the virtual IP address and restarts the Windows or Linux application automatically on Server 2.

The application finds the files replicated by SafeKit up to date on Server 2. The application continues to run on Server 2, locally modifying its files, which are no longer replicated to Server 1.

The failover time is equal to the fault-detection time (30 seconds by default) plus the application start-up time.

Step 3. Automatic failback

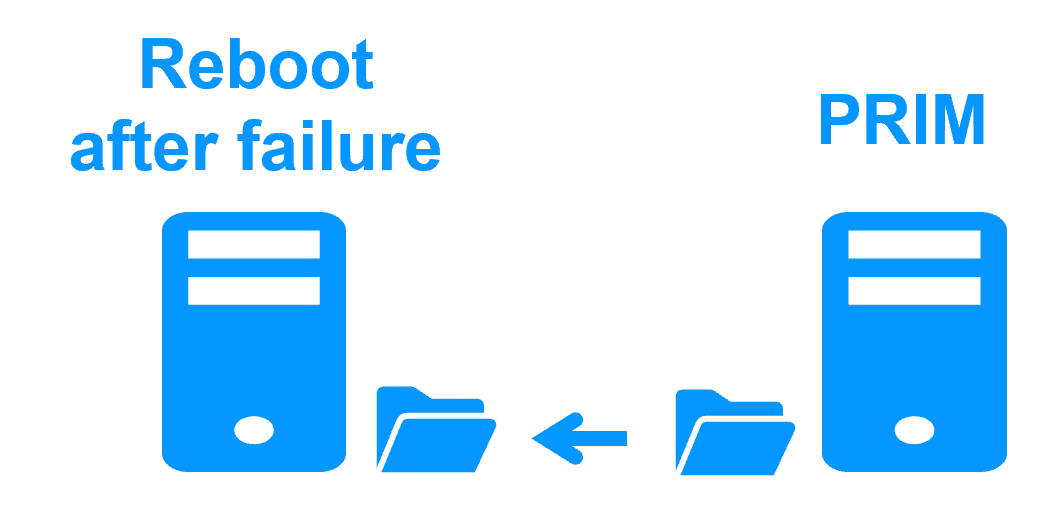

Failback involves restarting Server 1 after fixing the problem that caused it to fail.

SafeKit automatically resynchronizes the files, updating only the files modified on Server 2 while Server 1 was halted.

Failback takes place without disturbing the Windows or Linux application, which can continue running on Server 2.

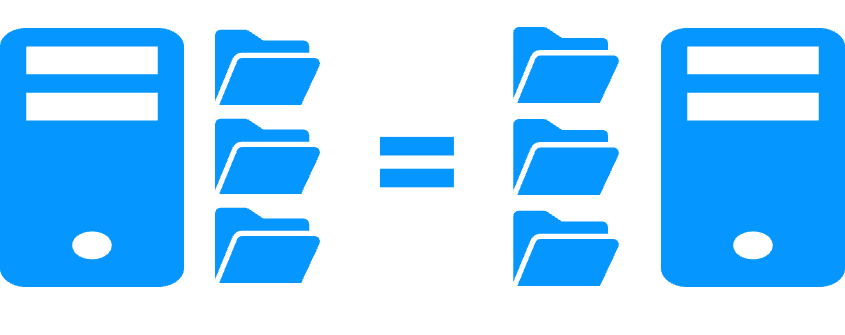

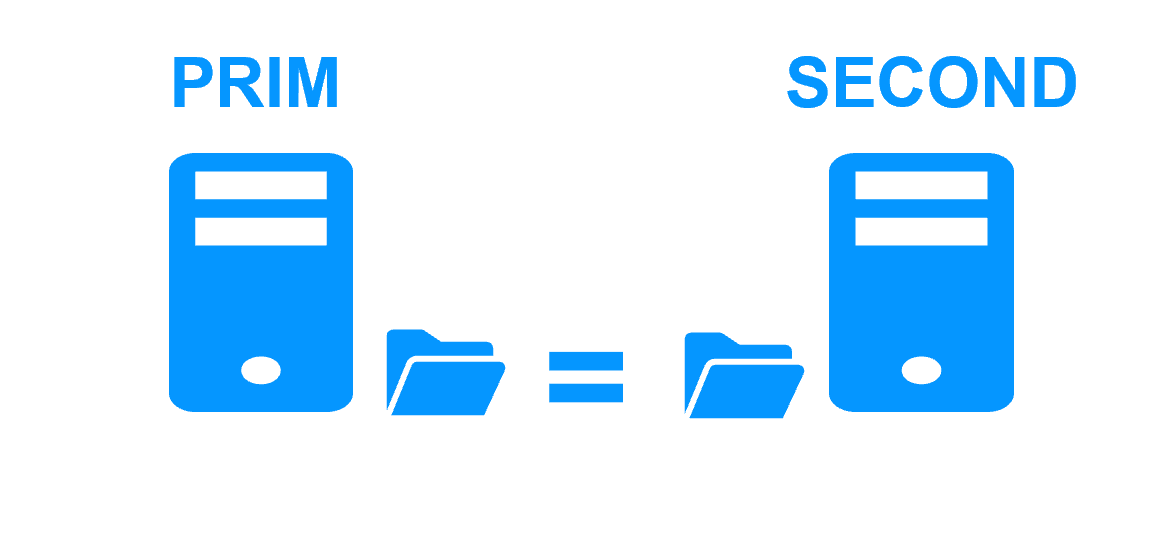

Step 4. Back to normal

After reintegration, the files are once again in mirror mode, as in step 1. The system is back in high-availability mode, with the Windows or Linux application running on Server 2 and SafeKit replicating file updates to Server 1.

If the administrator wishes the application to run on Server 1, he/she can execute a "swap" command either manually at an appropriate time, or automatically through configuration.

More information on power outage and network isolation in a cluster.

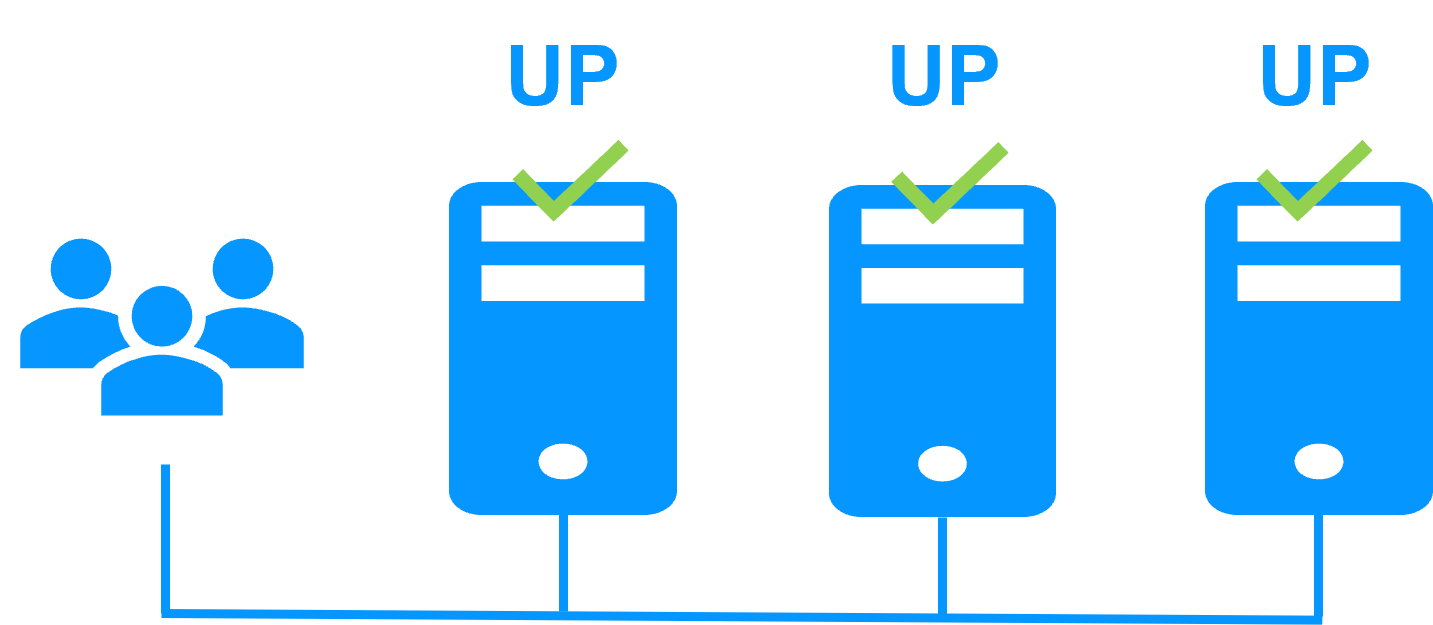

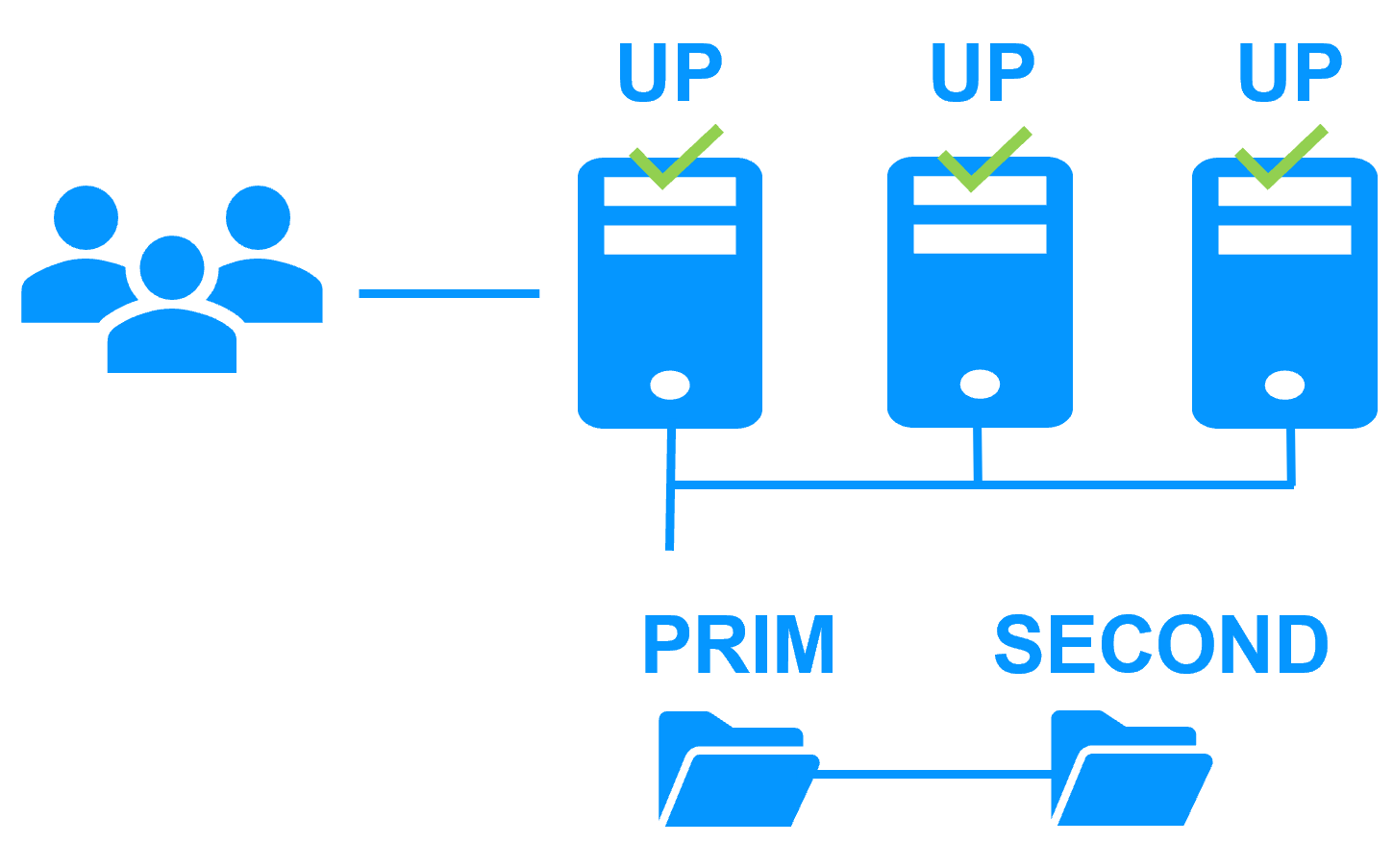

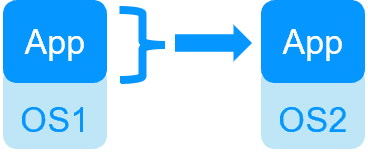

Redundancy at the application level

In this type of solution, only application data are replicated, and only the application is restarted in case of failure.

With this solution, restart scripts must be written to restart the application.

We deliver application modules to implement redundancy at the application level (like the Windows or Linux module provided in the free trial below). They are preconfigured for well known applications and databases. You can customize them with your own services, data to replicate, application checkers. And you can combine application modules to build advanced multi-level architectures.

This solution is platform agnostic and works with applications inside physical machines, virtual machines, in the Cloud. Any hypervisor is supported (VMware, Hyper-V...).

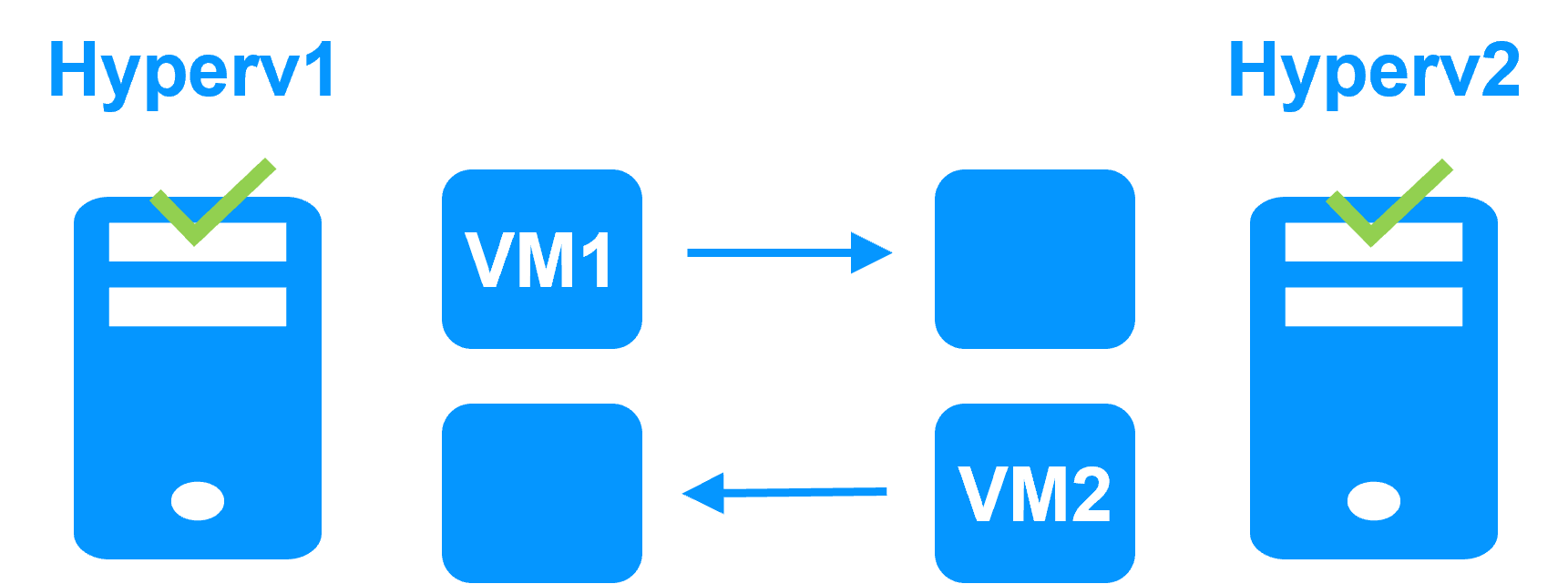

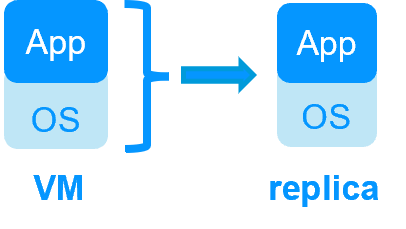

Redundancy at the virtual machine level

In this type of solution, the full Virtual Machine (VM) is replicated (Application + OS). And the full VM is restarted in case of failure.

The advantage is that there are no restart scripts to write per application and no virtual IP address to define. If you do not know how the application works, this is the best solution.

This solution works with Windows/Hyper-V and Linux/KVM but not with VMware. This is an active/active solution with several virtual machines replicated and restarted between two nodes.

- Solution for a new application (no restart script to write): Windows/Hyper-V, Linux/KVM

More comparison between VM HA vs Application HA

Why a replication of a few terabytes?

Resynchronization time after a failure (step 3)

- 1 Gb/s network ≈ 3 hours for 1 terabyte.

- 10 Gb/s network ≈ 1 hour for 1 terabyte, or less depending on disk write performance.

Alternative

- For a large volume of data, use external shared storage.

- More expensive, more complex.
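To relate the resynchronization figures above to a given data volume, a back-of-the-envelope estimate is enough. The sketch below computes the transfer time from the link rate alone; the `efficiency` parameter is a hypothetical knob for protocol overhead and disk limits, not a SafeKit setting. The figures quoted above are higher than the pure line-rate result, which presumably reflects such overheads.

```python
def resync_hours(data_bytes, link_gbps, efficiency=1.0):
    """Estimate the time to copy data_bytes over a link, in hours.

    link_gbps is the nominal link rate in gigabits per second;
    efficiency (0 to 1) is an assumed fraction of that rate actually used.
    """
    bits = data_bytes * 8
    seconds = bits / (link_gbps * 1e9 * efficiency)
    return seconds / 3600


if __name__ == "__main__":
    one_terabyte = 1e12
    # Line-rate lower bound for 1 TB over 1 Gb/s.
    print(round(resync_hours(one_terabyte, 1), 2))        # 2.22
    # Same volume with an assumed 75 % effective throughput.
    print(round(resync_hours(one_terabyte, 1, 0.75), 2))  # 2.96
    # 10 Gb/s link at line rate.
    print(round(resync_hours(one_terabyte, 10), 2))       # 0.22
```

Since SafeKit resynchronizes only the files modified while the other server was down, the full-volume estimate is an upper bound for a failback after a short outage.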

Why a replication < 1,000,000 files?

- Resynchronization time performance after a failure (step 3).

- Time to check each file between both nodes.

Alternative

- Put the many files to replicate in a virtual hard disk / virtual machine.

- Only the files representing the virtual hard disk / virtual machine will be replicated and resynchronized in this case.

Why a failover ≤ 32 replicated VMs?

- Each VM runs in an independent mirror module.

- Maximum of 32 mirror modules running on the same cluster.

Alternative

- Use an external shared storage and another VM clustering solution.

- More expensive, more complex.

Why a LAN/VLAN network between remote sites?

- Automatic failover of the virtual IP address with 2 nodes in the same subnet.

- Good bandwidth for resynchronization (step 3) and good latency for synchronous replication (typically a round-trip of less than 2ms).

Alternative

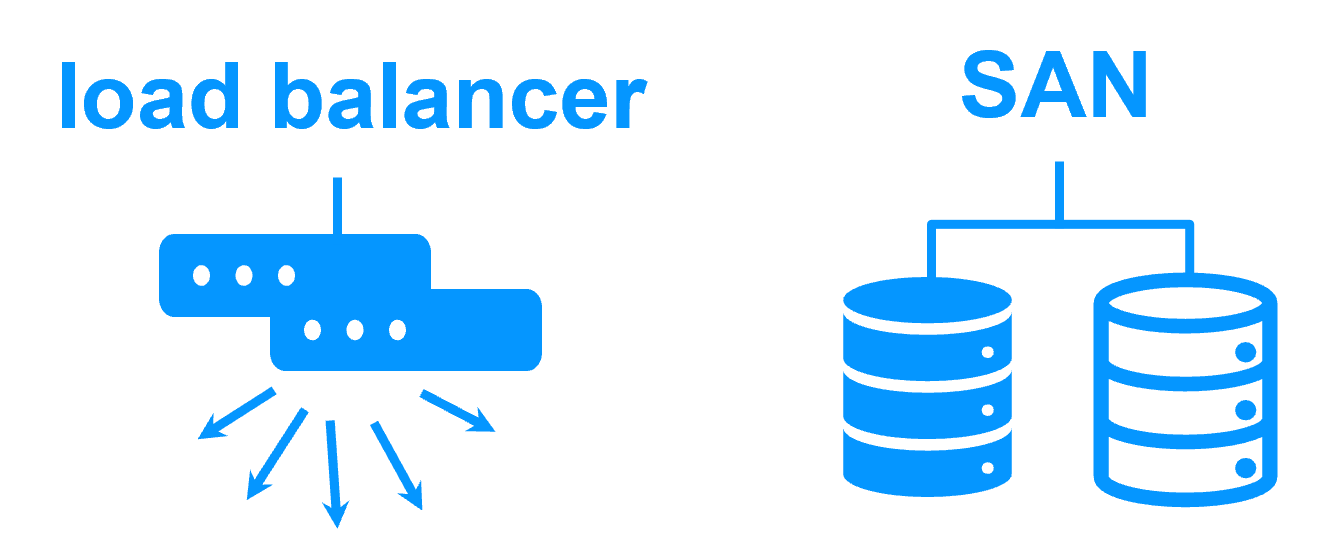

- Use a load balancer for the virtual IP address if the 2 nodes are in 2 subnets (supported by SafeKit, especially in the cloud).

- Use backup solutions with asynchronous replication for high latency network.

Network load balancing and failover

| Windows farm | Linux farm |
| --- | --- |
| Generic Windows farm | Generic Linux farm |
| Microsoft IIS | - |
| NGINX | |
| Apache | |
| Amazon AWS farm | |
| Microsoft Azure farm | |
| Google GCP farm | |
| Other cloud | |

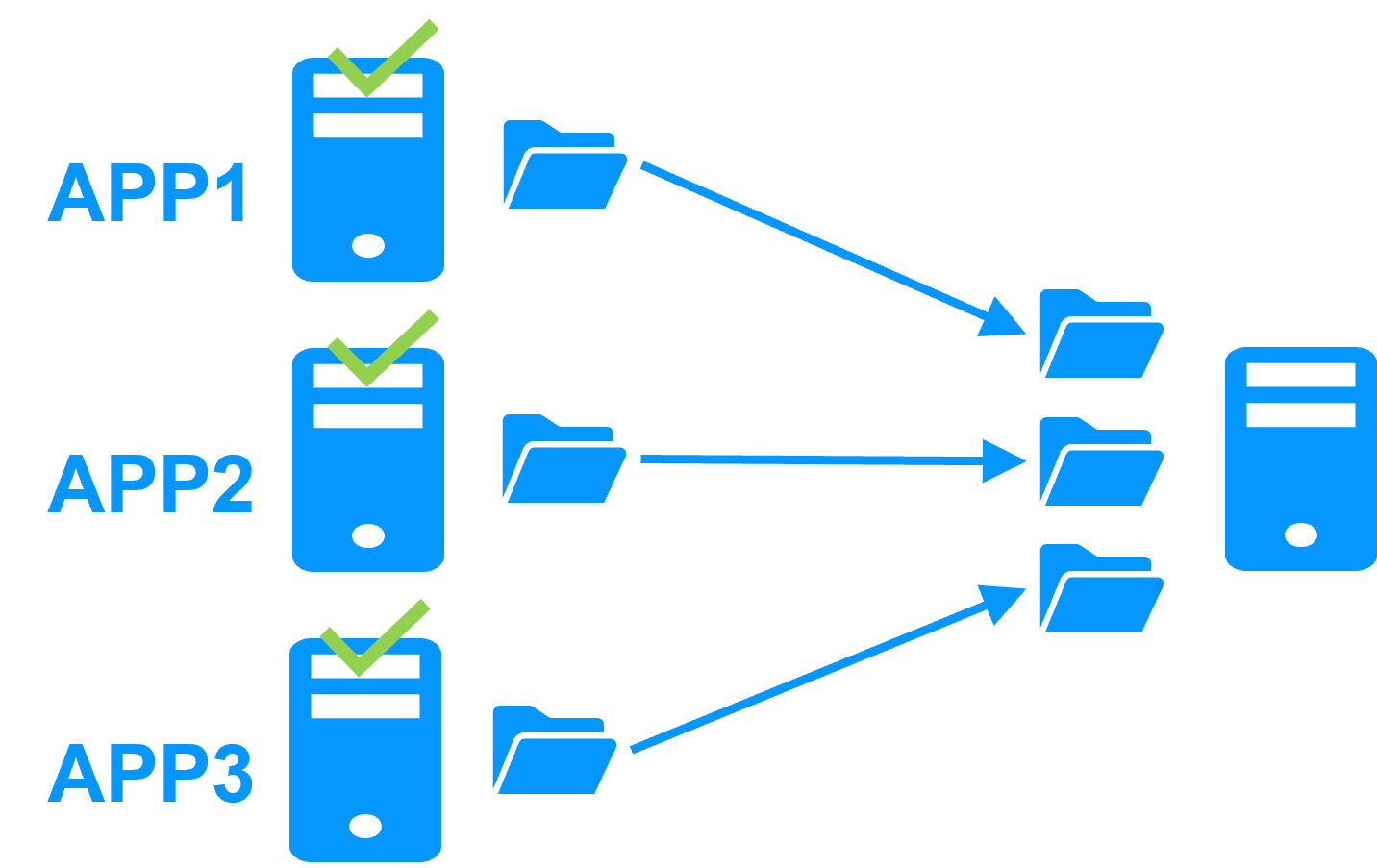

Advanced clustering architectures

Several modules can be deployed on the same cluster. Thus, advanced clustering architectures can be implemented:

- the farm+mirror cluster built by deploying a farm module and a mirror module on the same cluster,

- the active/active cluster with replication built by deploying several mirror modules on 2 servers,

- the Hyper-V cluster or KVM cluster with real-time replication and failover of full virtual machines between 2 active hypervisors,

- the N-1 cluster built by deploying N mirror modules on N+1 servers.

- Demonstration

- Examples of redundancy and high availability solution

- Evidian SafeKit sold in many different countries with Milestone

- 2 solutions: virtual machine cluster or application cluster

- Distinctive advantages

- More information on the web site

Evidian SafeKit mirror cluster with real-time file replication and failover

- 3 products in 1

- Very simple configuration

- Synchronous replication

- Fully automated failback

- Replication of any type of data

- File replication vs disk replication

- File replication vs shared disk

- Remote sites and virtual IP address

- Quorum and split brain

- Active/active cluster

- Uniform high availability solution

- RTO / RPO

Evidian SafeKit farm cluster with load balancing and failover |

|

|

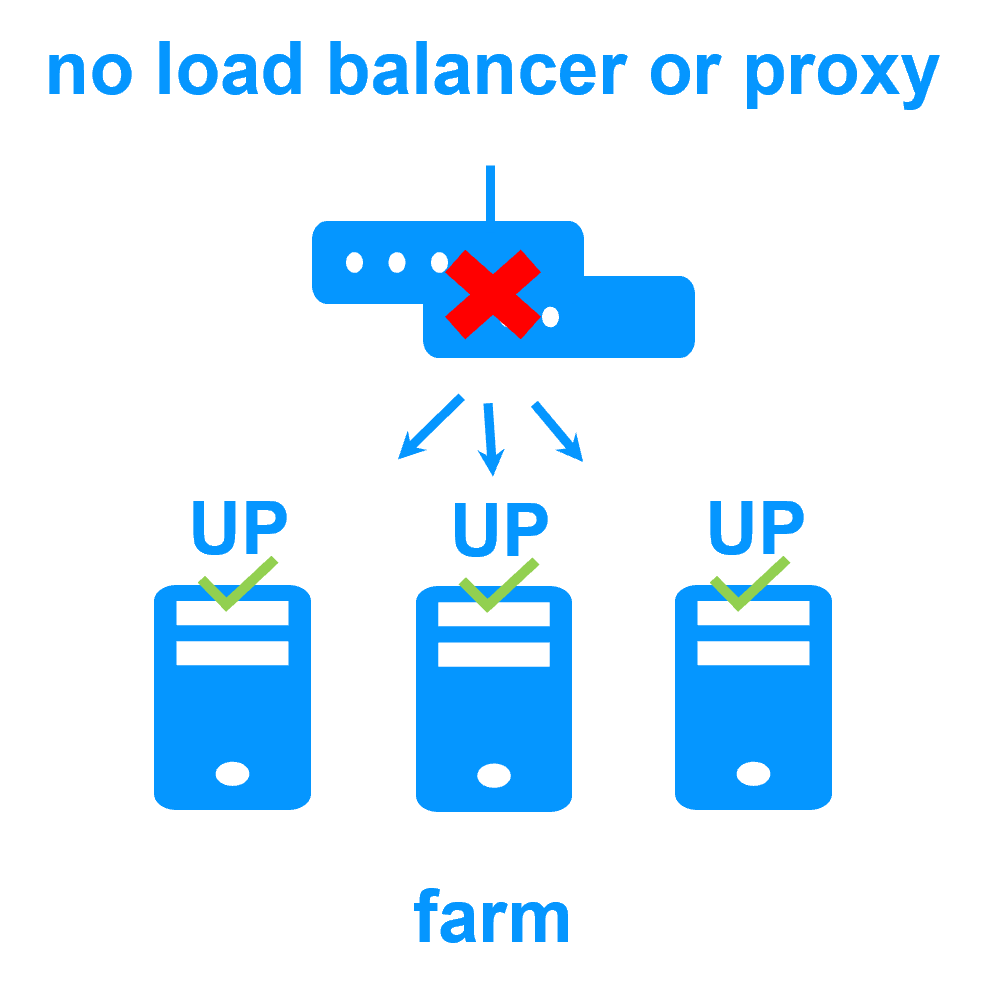

No load balancer or dedicated proxy servers or special multicast Ethernet address

|

|

|

All clustering features

|

|

|

Remote sites and virtual IP address

|

|

|

Uniform high availability solution

|

|

Software clustering vs hardware clustering

|

|

|

|

Shared nothing vs a shared disk cluster |

|

|

|

Application High Availability vs Full Virtual Machine High Availability

|

|

|

|

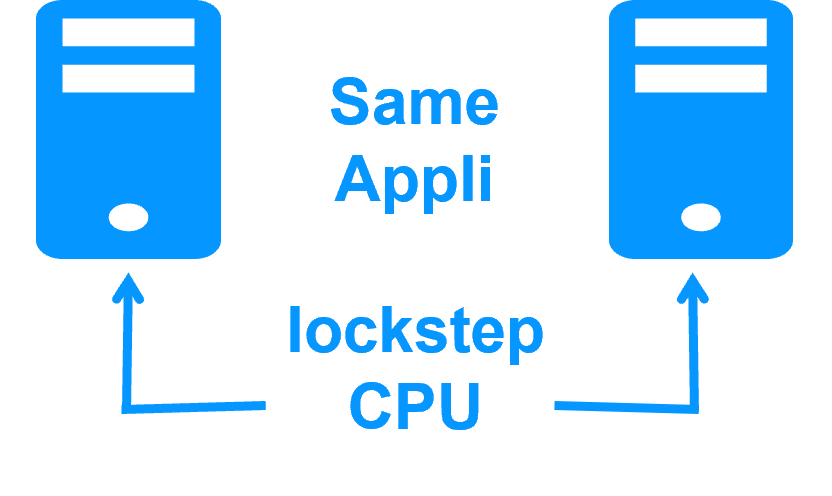

High availability vs fault tolerance

|

|

|

|

Synchronous replication vs asynchronous replication

|

|

|

|

Byte-level file replication vs block-level disk replication

|

|

|

|

Heartbeat, failover and quorum to avoid 2 master nodes

|

|

|

|

Virtual IP address primary/secondary, network load balancing, failover

|

|

|

|

Evidian SafeKit 8.2

All new features compared to SafeKit 7.5 described in the release notes

Packages

- Windows (with Microsoft Visual C++ Redistributable)

- Windows (without Microsoft Visual C++ Redistributable)

- Linux

- Supported OS and last fixes

One-month license key

Technical documentation

Training

Product information

Introduction

- Demonstration

- Examples of redundancy and high availability solution

- Evidian SafeKit sold in many different countries with Milestone

- 2 solutions: virtual machine or application cluster

- Distinctive advantages

- More information on the web site

- Cluster of virtual machines

- Mirror cluster

- Farm cluster

Installation, Console, CLI

- Install and setup / pptx

- Package installation

- Nodes setup

- Upgrade

- Web console / pptx

- Configuration of the cluster

- Configuration of a new module

- Advanced usage

- Securing the web console

- Command line / pptx

- Configure the SafeKit cluster

- Configure a SafeKit module

- Control and monitor

Advanced configuration

- Mirror module / pptx

- start_prim / stop_prim scripts

- userconfig.xml

- Heartbeat (<heartbeat>)

- Virtual IP address (<vip>)

- Real-time file replication (<rfs>)

- How real-time file replication works

- Mirror's states in action

- Farm module / pptx

- start_both / stop_both scripts

- userconfig.xml

- Farm heartbeats (<farm>)

- Virtual IP address (<vip>)

- Farm's states in action

Troubleshooting

- Troubleshooting / pptx

- Analyze the logs yourself

- Take snapshots for support

- Boot / shutdown

- Web console / Command lines

- Mirror / Farm / Checkers

- Running an application without SafeKit

Support

- Evidian support / pptx

- Get permanent license key

- Register on support.evidian.com

- Call desk